10 AI Control Questions Every Senior Executive Should Be Able to Answer

Every executive team is being asked to do two things at once: adopt AI faster, and prove the organisation is still in control of it.

That sounds manageable in theory. In practice, it is becoming harder by the quarter.

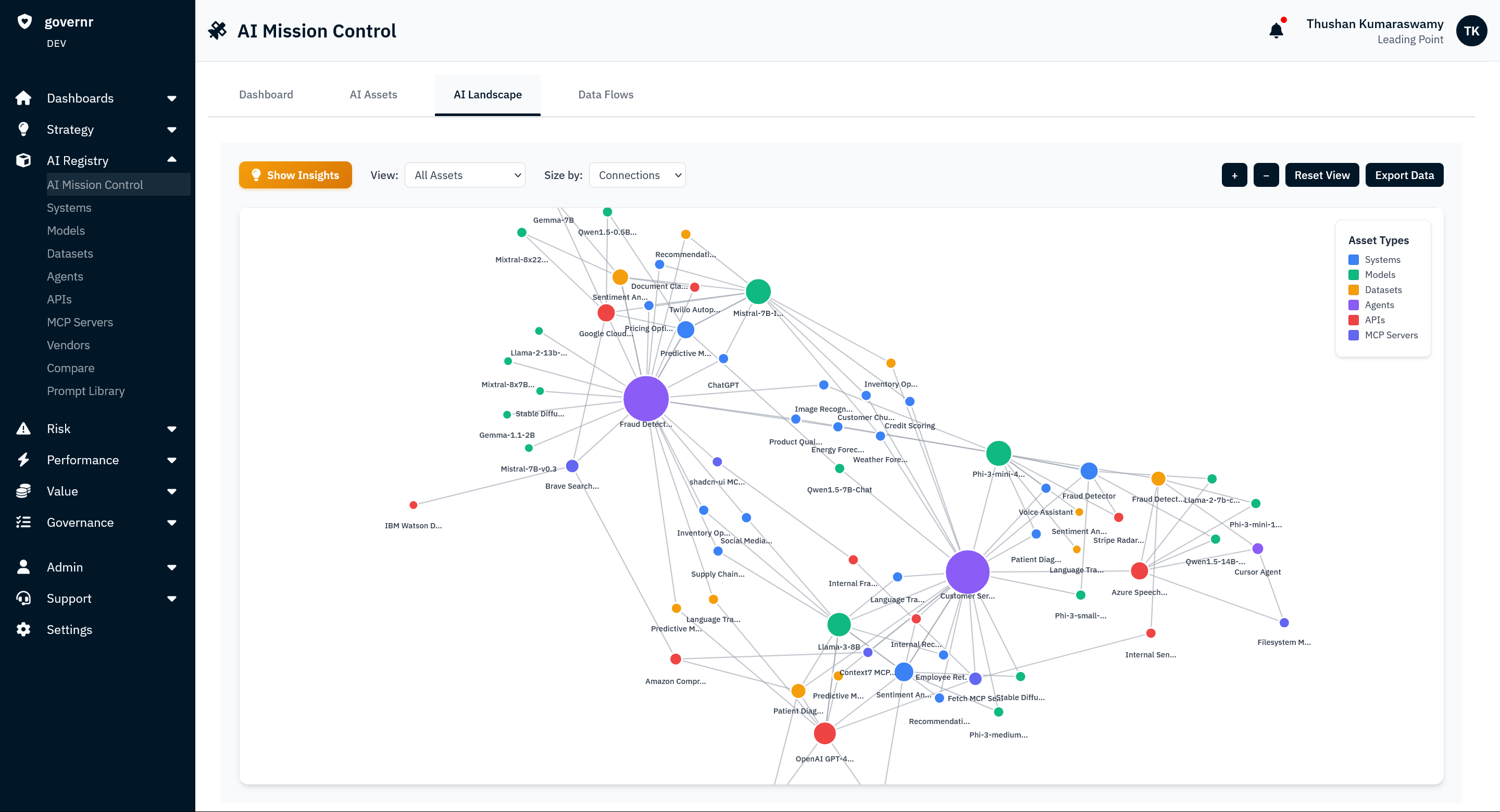

AI is spreading through the enterprise faster than most leadership teams can see it, assign it, monitor it, or explain it in business terms. It is not just the visible pilot projects or the approved internal tools. It is copilots turned on inside software you already use, model updates pushed quietly by vendors, workflow automations created by business teams, and agents connected to systems, data, and permissions that change more often than most control processes were designed to handle.

That is the real gap. What is live is growing quickly. What is actually visible, owned, monitored, and provably under control is not.

Regulation matters, but it is not the starting point. The starting point is operational control. If you cannot see your AI estate, understand what each system is doing, know what it touches, detect when it changes, and intervene when necessary, you do not have a governance model. You have scattered documentation and delayed discovery.

That is why the pressure is intensifying. Modern AI governance expectations are converging around a simple operating standard: know what AI you have, assign accountable human oversight, monitor how it is used, keep evidence, and be able to show control on demand. That pattern is visible in the EU AI Act’s requirements for human oversight, monitoring, and logging for high-risk systems, and in NIST’s AI Risk Management Framework, which is organised around four functions: Govern, Map, Measure, and Manage.

Here are the 10 questions every senior executive should be able to answer but, in most organisations, still cannot.

1. How many AI systems are running in your organisation right now?

Not the approved ones. All of them.

That includes the obvious systems, but also the ones leadership often misses: copilots embedded in productivity suites, AI assistants inside CRM and service platforms, chat-based tools built by individual teams, developer wrappers around foundation models, recommendation engines added by vendors, and agents connected to email, documents, finance systems, or internal knowledge bases.

The reason this question is so hard is that AI rarely arrives as one large procurement event. It arrives as a feature update, a default setting, a plug-in, an API call, or an experiment built into a workflow that already exists. By the time leadership asks for an inventory, the organisation is usually trying to reconstruct reality from contracts, screenshots, and tribal knowledge.

If your answer takes more than a day to assemble, you do not have control. You have a discovery problem.

2. Who is the named accountable owner of each AI system?

“The technology team” is not an answer.

For every material AI system, there should be a named person who is accountable for how it is used, what risk it creates, what controls apply, and what happens if it fails. Without that, the company carries the operational and legal exposure, but no one is truly responsible for oversight in practice.

This is where liability stops being abstract. It is corporate liability because the business owns the outcome. But it can also become personal exposure for executives and senior managers when decisions, controls, approvals, and reporting cannot be tied back to a real accountable owner. The EU AI Act is one example of this direction of travel: deployers of high-risk AI systems must assign human oversight to natural persons with the necessary competence, training, and authority.

When accountability is vague, protection is weak. The organisation absorbs the risk, and leadership still gets asked who knew and who approved it.

3. What data does your AI touch, and is any of it regulated?

Knowing what AI you have is only the first layer. The harder question is the context of its use.

An internal assistant summarising non-sensitive notes is very different from an AI workflow that can access customer communications, claims files, employee records, trading-related data, patient information, credit-relevant data, or confidential internal reports. The same model can create very different risk depending on what data it sees, what decision it influences, and what system it can act on.

This is why inventory alone is not enough. Executives need to understand not just what AI exists, but where it sits in the business, what data it touches, what users rely on it, what actions it can trigger, and whether it is being used in a regulated or high-impact context.

Without that context, organisations tend to underestimate exposure until something breaks. That risk is not hypothetical. Netskope reported that genAI-related data policy violations more than doubled over the prior year, and most incidents involved sensitive data such as source code, intellectual property, passwords, and regulated personal, financial, or health data.
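To make the idea of "inventory plus context" concrete, here is a minimal sketch of what a single inventory record might capture. Everything in it is an assumption made for illustration: the field names, the `REGULATED` category list, and the escalation rules are hypothetical, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory; every field name is illustrative."""
    name: str
    business_unit: str
    owner: Optional[str]                      # a named accountable person, not a team
    data_categories: set = field(default_factory=set)
    can_trigger_actions: bool = False         # can it act on other systems?


# Assumed list of data categories this organisation treats as regulated.
REGULATED = {"personal", "financial", "health"}


def needs_attention(record: AISystemRecord) -> list:
    """Return the reasons a record should be escalated, if any."""
    reasons = []
    if not record.owner:
        reasons.append("no named owner")
    touched = record.data_categories & REGULATED
    if touched:
        reasons.append("touches regulated data: " + ", ".join(sorted(touched)))
    if touched and record.can_trigger_actions:
        reasons.append("can act on systems while handling regulated data")
    return reasons


# Illustrative entries only.
inventory = [
    AISystemRecord("notes-summariser", "Operations", "J. Doe", {"internal-notes"}),
    AISystemRecord("claims-assistant", "Claims", None, {"personal", "health"}, True),
]

for record in inventory:
    print(record.name, needs_attention(record))
```

The point of the sketch is that the same record answers several questions at once: what exists, who owns it, what data it touches, and whether it can act.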

4. What changed in your AI estate in the last 7 days?

This is where most AI governance models start to fail.

Executives often assume that once a system has been reviewed, it remains broadly stable. AI does not work like that. Models are updated. Safety settings change. New features are added. Tools are connected. Prompts are edited by non-technical users. Agents get new permissions. Vendors quietly replace underlying capabilities without creating a new contract or a new governance process.

Public model release notes alone make the point. OpenAI’s release notes show multiple changes across 2025, including new model launches, replacements, and a rollback of an o4-mini snapshot less than a week after deployment because automated monitoring detected an increase in content flags. That is a public example from one provider. Inside an enterprise, multiply that pattern across vendors, internal tools, and business teams.

If you cannot detect material change quickly, then yesterday’s approval does not prove today’s control.
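One way to picture change detection is as a comparison between two snapshots of the AI estate taken a week apart. The sketch below assumes such snapshots exist as simple dictionaries; the system names, the `model` and `tools` attributes, and the `diff_estate` helper are all hypothetical.

```python
def diff_estate(previous: dict, current: dict) -> dict:
    """Compare two snapshots keyed by system name.

    Each value is a dict of attributes worth watching, for example the
    model version and the connected tools. Attribute names are assumptions.
    """
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    changed = {}
    for name in sorted(current.keys() & previous.keys()):
        before, after = previous[name], current[name]
        # Collect every attribute whose value differs between snapshots.
        fields = sorted(
            key for key in before.keys() | after.keys()
            if before.get(key) != after.get(key)
        )
        if fields:
            changed[name] = fields
    return {"added": added, "removed": removed, "changed": changed}


# Illustrative snapshots only.
last_week = {
    "crm-copilot": {"model": "vendor-model-a", "tools": ["email"]},
    "support-bot": {"model": "vendor-model-b", "tools": []},
}
today = {
    "crm-copilot": {"model": "vendor-model-a2", "tools": ["email", "calendar"]},
    "support-bot": {"model": "vendor-model-b", "tools": []},
    "finance-agent": {"model": "vendor-model-c", "tools": ["ledger"]},
}

report = diff_estate(last_week, today)
print(report)
```

In this example the report would flag one new system and one existing system whose model and tool connections both changed, which is the kind of summary a weekly review could start from.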

5. What is your total AI risk exposure in financial terms?

This is where many firms get stuck, because “medium risk” is not a number a business can operate against.

Boards, CFOs, insurers, auditors, and regulators do not make decisions based on vague colour-coding. They work in the language of exposure, materiality, downside, resilience, and cost. If leadership cannot explain AI risk in dollar or pound terms, it becomes difficult to prioritise investment, justify controls, compare scenarios, or explain whether a deployment is proportionate to the risk.

This is not about pretending risk can be measured with false precision. It is about being able to say: if this system fails, drifts, leaks data, produces harmful output, or operates outside approved bounds, what is the plausible financial impact through remediation cost, lost business, regulatory action, customer harm, downtime, fraud, or reputational damage?

Businesses already do this for cyber, operational, model, and conduct risk. AI is no different. Quantified figures are what make such risks comparable: IBM’s 2025 Cost of a Data Breach analysis, for example, found that organisations using AI and automation extensively in security saw shorter breach lifecycles and saved an average of $1.9 million in breach costs compared with those that did not. A number like that can be weighed directly against the cost of a control. That is exactly why exposure has to be quantified. It changes business decisions.
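A simple way to move from "medium risk" to a number, without claiming false precision, is to estimate a probability and a low-to-high impact range for each failure scenario and sum the probability-weighted results. The sketch below does only that. The scenarios, probabilities, and amounts are invented for illustration, and a real model would be considerably richer.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    annual_probability: float   # estimated chance of occurring in a year
    impact_low: float           # plausible low-end cost
    impact_high: float          # plausible high-end cost


def expected_loss_range(scenarios):
    """Sum probability-weighted low and high impacts across scenarios."""
    low = sum(s.annual_probability * s.impact_low for s in scenarios)
    high = sum(s.annual_probability * s.impact_high for s in scenarios)
    return low, high


# Hypothetical figures; none of these come from a real assessment.
scenarios = [
    Scenario("data leak via assistant", 0.10, 200_000, 1_000_000),
    Scenario("harmful output reaches customers", 0.20, 50_000, 250_000),
]

low, high = expected_loss_range(scenarios)
print(f"Expected annual loss: {low:,.0f} to {high:,.0f}")
```

Reporting a range rather than a single figure keeps the estimate honest about its uncertainty while still giving the board something it can compare against the cost of controls.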

6. Which of your third-party vendors have introduced AI in the last 12 months, and have you assessed it?

This is one of the fastest-growing blind spots in enterprise AI.

Most organisations still think about AI as something they build or buy intentionally. In reality, much of the new risk arrives through vendors they already use. AI appears as an assistant, an embedded model, an automation feature, a scoring layer, a recommendation engine, or a workflow copilot inside products that were originally approved for a very different purpose.

That means risk can enter the business without a new procurement event, without fresh due diligence, and without any executive conversation about whether the control model still holds.

Third-party and ecosystem risk is rising in parallel with AI adoption. Bridewell’s 2025 transport research found that organisations are under pressure to adapt to emerging AI-driven threats, with AI-powered phishing cited as a concern by up to 89% of respondents and automated hacking by up to 84%.

Third-party AI is not safer because someone else built it. In many firms, it is harder to see, harder to test, and easier to trust by default.

7. Can you produce an audit trail showing active human oversight of your AI, not just a policy?

A policy is not proof of control.

This is the difference between governance theatre and governance in practice. Regulators, auditors, and internal risk functions are increasingly interested not in whether a policy document exists but in whether someone can show review history, exceptions, interventions, approvals, escalation paths, and evidence that oversight actually happened as the system operated and changed.

That is also the direction of the EU AI Act, which requires human oversight and monitoring for high-risk systems, and requires deployers to retain the automatically generated logs that are under their control.

If your accountable owners were assigned on paper but never actually reviewed a system’s use, decisions, or changes, the organisation holds the liability without the protection that active oversight is supposed to provide.
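The difference between a policy and an audit trail can be tested mechanically: for each system, is there a dated record of a named person reviewing it recently? The sketch below assumes review events are logged as simple tuples and that 30 days is the acceptable gap. Both the log format and the threshold are hypothetical.

```python
from datetime import date, timedelta


def stale_oversight(reviews, systems, as_of, max_age_days=30):
    """Return systems with no recorded human review within max_age_days.

    `reviews` is a list of (system, reviewed_on, reviewer) tuples,
    an assumed log format used only for this illustration.
    """
    cutoff = as_of - timedelta(days=max_age_days)
    latest = {}
    for system, reviewed_on, _reviewer in reviews:
        # Keep only the most recent review date per system.
        if system not in latest or reviewed_on > latest[system]:
            latest[system] = reviewed_on
    # Systems with no review at all are treated as overdue.
    return sorted(s for s in systems if latest.get(s, date.min) < cutoff)


# Illustrative log entries only.
reviews = [
    ("crm-copilot", date(2025, 6, 20), "A. Owner"),
    ("support-bot", date(2025, 3, 1), "B. Owner"),
]
systems = ["crm-copilot", "support-bot", "finance-agent"]

overdue = stale_oversight(reviews, systems, as_of=date(2025, 7, 1))
print(overdue)
```

A system that appears in the inventory but never in the review log is exactly the case where oversight exists on paper only.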

8. Have any of your AI agents escalated privileges beyond what was originally approved?

This is where liability starts moving at machine speed.

When you employ a human, you are liable for their actions within the scope of their role. When you deploy an AI agent, that accountability does not disappear. But the scale changes completely.

A person acts one decision at a time. An agent can operate across systems, tool calls, integrations, and workflows at machine speed. That means the exposure is not just larger. It can compound far faster than traditional human supervision models were designed to handle.

The real risk is not simply that an agent exists. It is that the agent gains access over time through new integrations, delegated permissions, tool chains, connector sprawl, or privilege drift. Each individual change can look small. In combination, they can create a very different risk profile than the one leadership originally approved.

This is one reason access control and continuous monitoring are becoming central to AI control, not peripheral to it. When agents can touch more systems, make more decisions, and act faster than a person ever could, the liability surface expands with them.
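Privilege drift can be checked by comparing what an agent was approved to do with what it can do today. The sketch below treats both as sets of permission strings. The scope names and the `privilege_drift` helper are hypothetical, and a real check would pull current permissions from the relevant identity or integration platform.

```python
def privilege_drift(approved: set, current: set) -> dict:
    """Compare an agent's current permissions with its approved baseline."""
    return {
        # Permissions the agent holds that nobody signed off on.
        "beyond_approval": sorted(current - approved),
        # Approved permissions that are no longer present.
        "approved_but_absent": sorted(approved - current),
    }


# Illustrative scopes only.
approved_scopes = {"crm:read", "email:draft"}
current_scopes = {"crm:read", "email:draft", "email:send", "finance:read"}

drift = privilege_drift(approved_scopes, current_scopes)
print(drift)
```

Each added scope here looks minor in isolation, yet together they turn an agent that drafts emails into one that can send them and read finance data, which is the compounding effect described above.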

9. What is your process when a model vendor is breached or changes its output?

Most incident response plans still assume the system is stable and mostly internal.

That is no longer true. If a model provider changes behaviour, suffers a breach, shifts output quality, alters an API, or updates an embedded feature, the consequences can cascade through your internal workflows quickly. What looked like a vendor issue can become your customer issue, your operations issue, or your board issue in hours.

A workable AI control model has to include explicit response playbooks for third-party AI changes, not just internal failures. That means defined triggers, ownership, fallback plans, escalation steps, and evidence trails.

If your incident response plan does not explicitly cover third-party AI behaviour change, it does not cover one of your most likely failure modes.

10. If your board asked today, could you show a regular cadence of quantified AI risk reporting?

By the time the board asks about AI, it is usually already too late for a one-off presentation.

What matters is cadence. What AI exists. What changed. Where the material exposure sits. What incidents or exceptions occurred. What management did in response. What remains unresolved. And whether the business can show, with evidence, that oversight is happening at the same speed AI is being deployed.

This is where AI governance becomes an executive control system rather than a policy topic. Boards do not need more abstract updates on “AI strategy.” They need reliable reporting on exposure, accountability, change, and response.

The pattern behind all 10 questions

None of these are trick questions.

They are the AI version of questions executives already answer in other risk domains: What is our exposure? Who owns it? What changed? What evidence do we have? How fast can we intervene? What does the board see?

The problem is that most firms still manage AI through a mix of one-time reviews, scattered documentation, procurement checklists, and static questionnaires. That model breaks when technology changes weekly, when risk enters through existing vendors, and when agents can act at a scale no human control process was built to supervise manually.

The real issue is not just knowing what AI you have. It is knowing what it is doing.

Most organisations are still trying to solve the first-order problem: what AI systems exist?

That is necessary, but not sufficient.

The harder question is contextual. Where is each system used? What data does it touch? What actions can it take? What systems is it connected to? What changed recently? What would happen if it failed, drifted, or behaved unexpectedly? How much exposure would that create, and for whom?

Inventory without context becomes a list. Context without change detection becomes stale. Change detection without accountability becomes noise. Control only starts to exist when those pieces come together: inventory, ownership, behaviour, quantified exposure, proof of oversight, and the ability to intervene.

Why the control gap is widening

This is the central problem leadership teams are now facing.

They are being told to accelerate AI adoption because the market demands it. At the same time, they are being asked to prove the organisation is still under control. The gap between those two asks is widening because AI is scaling through embedded software, internal experimentation, and connected agents faster than most governance models can keep up.

That is why this is no longer just a technology issue or a compliance issue. It is a leadership issue.

The organisations that handle this well will not be the ones with the longest policy documents. They will be the ones that can answer, in hours rather than weeks, what AI they have, where it sits, who owns it, what it touches, what changed, how much exposure it creates, and what they can do if something goes wrong.

Final takeaway

The core AI challenge for leadership is not ambition. It is control.

Executives are being asked to move faster on AI while proving the organisation remains visible, governable, and defensible as that AI spreads. Regulation increases the consequences, but the underlying problem is operational: if you cannot see your AI, understand its context, detect its changes, quantify its exposure, and intervene when needed, you are not governing it.

You are hoping it stays contained.