AI Governance as an Adoption Accelerator, not a Brake

What if AI governance is the thing that helps firms say yes faster?

That is the question more organisations need to ask.

One of the most persistent myths in enterprise AI is that governance slows adoption. The logic sounds intuitive. More governance means more process. More process means more friction. More friction means slower rollout.

But that is not what happens in practice. Weak governance slows AI adoption far more than strong governance does. The organisations that move fastest with AI are not usually the ones with the least oversight. They are the ones with enough visibility, control, and confidence to approve use cases without guessing. That is the real reframe.

Why poor AI governance creates hesitation

When firms lack a credible AI governance framework, every new use case becomes more difficult than it should be. Risk teams hesitate because they cannot see enough. Legal teams worry because ownership is unclear. Technology teams move ahead without confidence that the process will keep up. Business teams feel blocked because nobody can give a clear answer. Boards ask for assurance that management cannot easily evidence.

This is not a problem of too much governance. It is a problem of too little usable governance.

When the control model is weak, vague, or disconnected from how AI is actually being used, the organisation defaults to caution. Not because people are anti-innovation, but because uncertainty is difficult to underwrite.

That is why many firms end up in the same pattern:

- business teams think risk is slowing them down

- control teams feel exposed

- approvals become inconsistent

- every new AI use case turns into a bespoke negotiation

The visible friction shows up in governance. The real cause is lack of confidence.

What strong enterprise AI governance actually provides

A mature enterprise AI governance model does not just reduce risk. It creates the conditions for faster decision-making. It does that in four ways.

1. It improves visibility

If the organisation has a live view of what AI is already operating, new use cases can be assessed in context rather than in isolation.

2. It creates clearer approval pathways

When AI risk tiers, ownership, review thresholds, and control requirements are defined, fewer decisions need to be reinvented each time.

3. It gives control functions confidence

Risk, legal, compliance, and security teams are much more willing to support adoption when they trust the underlying control model.

4. It gives leadership a defensible posture

Executives and boards are far more likely to support scaled AI adoption when they are not relying on fragmented information and informal reassurance.

This is what good governance really produces: confidence. And confidence is what speeds adoption.
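As a rough illustration of the second point, a defined approval pathway can be thought of as a simple lookup from risk tier to required reviewers, so that nobody has to renegotiate the route each time. The sketch below is a minimal Python example under stated assumptions: the tier names, the reviewer groups, and the `required_reviews` function are all hypothetical and not taken from any specific framework.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical tiers; a real framework would define its own.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping from risk tier to the functions that must sign off.
REVIEW_PATHWAYS = {
    RiskTier.LOW: ["business owner"],
    RiskTier.MEDIUM: ["business owner", "risk"],
    RiskTier.HIGH: ["business owner", "risk", "legal", "security"],
}


@dataclass
class UseCase:
    name: str
    owner: str
    tier: RiskTier


def required_reviews(use_case: UseCase) -> list[str]:
    """Return the predefined reviewers for a use case's risk tier."""
    # Copy the list so callers cannot mutate the shared pathway table.
    return list(REVIEW_PATHWAYS[use_case.tier])


if __name__ == "__main__":
    summariser = UseCase("meeting summariser", "operations", RiskTier.LOW)
    print(required_reviews(summariser))
```

The design choice here is that the pathway is decided once, in the table, rather than case by case. A low-tier use case gets a short route and a high-tier one gets a fuller review, and both are predictable in advance.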

The wrong binary: innovation versus control

Too many AI discussions still frame the issue as innovation versus governance. That is the wrong binary. The real choice is between:

- uncontrolled adoption, where AI spreads faster than the organisation can understand or supervise it

- governed adoption, where the organisation can move faster because the operating model is clear

The first path may feel faster at the beginning. It avoids friction up front.

But it usually creates more drag later:

- emergency policy reactions

- procurement bottlenecks

- duplicated tooling

- fragmented ownership

- board anxiety

- rework across functions

- incident reviews

- regulatory discomfort

The second path can feel more deliberate at first. But once established, it compounds speed. Teams know what is allowed. Risk thresholds are clearer. Review pathways are more predictable. Change is monitored. Control functions are more comfortable saying yes. That is what scalable adoption looks like.

Why CIOs, CTOs, and transformation leaders should care

The strongest message for technology leaders is not “we need more oversight.” It is “we need less ambiguity.”

Most CIOs and CTOs do not want a governance programme that intervenes late and slows everything down. They want a model that lets useful AI move through the organisation without every initiative becoming a one-off debate. That only happens when governance is operational.

That means:

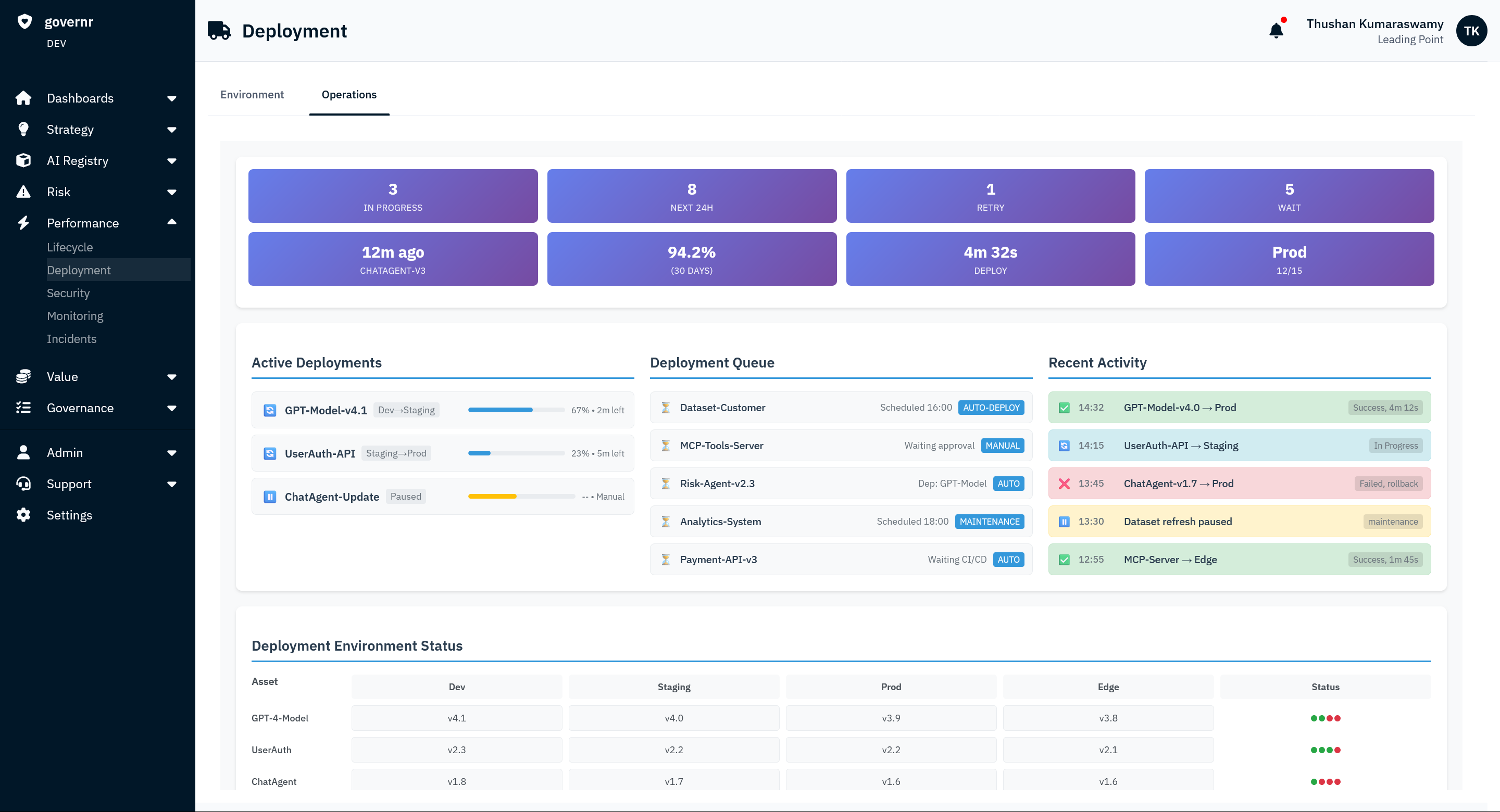

- a live AI inventory

- usable AI risk classification

- defined ownership

- clear review thresholds

- control expectations by use case

- visibility into third-party AI

- change detection across the environment

- evidence that is usable for board, audit, and regulator questions

When these pieces exist, governance stops functioning as a defensive layer added after the fact. It becomes part of how the business scales AI responsibly.

Why weak governance creates more “no” than strong governance

A lot of firms still think governance slows adoption because their current governance process does exactly that. But that is not because governance exists. It is because the model is immature.

When the organisation cannot answer simple questions like:

- What AI is live?

- Who owns it?

- What data does it touch?

- What changed?

- Which controls are in place?

the only safe organisational response is hesitation. That is what gets interpreted as resistance. In reality, it is unresolved uncertainty. The firms that say yes faster are usually not the firms with the loosest standards. They are the firms with the clearest line of sight into what is being approved.
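One way to make those five questions operational is to treat them as fields on an inventory record and flag any that are still unanswered. The following is a minimal sketch, assuming a hypothetical `InventoryRecord` structure; the field names and the `unanswered_questions` helper are illustrative only and do not describe any particular tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InventoryRecord:
    # Hypothetical fields mirroring the five questions in the text.
    system_name: str
    is_live: Optional[bool] = None                          # What AI is live?
    owner: Optional[str] = None                             # Who owns it?
    data_touched: list[str] = field(default_factory=list)   # What data does it touch?
    last_change: Optional[str] = None                       # What changed?
    controls: list[str] = field(default_factory=list)       # Which controls are in place?


def unanswered_questions(record: InventoryRecord) -> list[str]:
    """List the questions this record cannot yet answer.

    Simplifying assumption: an empty list or missing value means
    "unknown", not "none".
    """
    gaps = []
    if record.is_live is None:
        gaps.append("What AI is live?")
    if not record.owner:
        gaps.append("Who owns it?")
    if not record.data_touched:
        gaps.append("What data does it touch?")
    if not record.last_change:
        gaps.append("What changed?")
    if not record.controls:
        gaps.append("Which controls are in place?")
    return gaps


if __name__ == "__main__":
    record = InventoryRecord("contract review assistant", is_live=True, owner="legal ops")
    for question in unanswered_questions(record):
        print("Unresolved:", question)
```

A record with no gaps is one that a control function can approve against with a clear line of sight. A record with several gaps is where hesitation tends to come from.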

AI governance is becoming the infrastructure for scale

The next phase of enterprise AI will not be won by firms that merely experiment the fastest.

It will be won by firms that can scale use cases across teams, vendors, systems, and data environments without losing control of the estate. That requires more than enthusiasm. It requires a governance model the business can actually use.

The best organisations are already shifting their language. They are moving away from seeing governance as the group that intervenes late and toward seeing it as the function that makes safe AI adoption possible at enterprise scale. That is the right framing, because in most organisations, the real brake on AI is not governance.

It is the absence of a governance model strong enough to support confidence. And confidence is what allows firms to move.