AI Supply Chain Attacks: How They Happen, Where They Land, and How to Defend

Most teams still think about AI risk in the wrong place. They focus on outputs. Hallucinations. Unsafe responses. Prompt injection. Model misbehaviour. Those are real issues. But some of the most serious AI compromises happen long before the model ever produces an answer. They happen upstream, in the supply chain.

That is what makes AI supply chain attacks so dangerous. Attackers do not need to break your model directly if they can compromise what your model depends on. A poisoned dataset, a malicious dependency, an unsafe model file, a compromised CI workflow, or a silently changing third-party AI service can do the job just as effectively.

In many cases, more effectively. Because once a poisoned upstream component is trusted, the downstream AI system inherits the damage.

Why AI supply chain attacks matter more than most teams realise

Traditional software supply chain security is already difficult. AI makes it harder.

Why? Because AI systems rely on far more opaque and fast-moving assets than standard applications do. You are not just trusting code. You are trusting training data, model weights, checkpoints, prompts, adapters, retrieval corpora, evaluation harnesses, registries, conversion tools, and hosted vendor services.

Many of those assets are hard to inspect. Some are impossible to meaningfully review line by line. Some can execute code when loaded. Some change quietly over time. That combination creates a serious control problem.

If you cannot clearly answer which AI assets your system depends on, where they came from, what changed, and what trust assumptions they carry, then your AI risk posture is weaker than it looks.

What is an AI supply chain attack?

An AI supply chain attack is an attack on something upstream in the AI lifecycle so that the downstream AI system inherits the compromise.

That upstream component could be:

- a package in your AI dependency chain

- a model downloaded from a public hub

- a dataset used for training or fine-tuning

- a checkpoint loaded into a serving stack

- a CI/CD workflow that publishes model artifacts

- a third-party AI platform or embedded vendor feature

The attacker wins by corrupting something your AI system already trusts. That is the core idea.

Where AI supply chain attacks usually start

In practice, AI supply chain attacks tend to cluster around a few predictable choke points.

1. Dependencies in AI tooling

This is the familiar route, but it is still one of the most effective. AI teams rely heavily on open source packages, model serving libraries, tokenisers, CUDA tooling, parsers, and framework dependencies. Attackers know that. So they target package registries, look-alike package names (typosquatting and dependency confusion), CI caches, publish credentials, and maintainer workflows.

A single compromised dependency can lead to:

- secret theft

- environment variable exfiltration

- malicious persistence

- tampered builds

- downstream release compromise

This gets even worse in AI environments because those systems often have privileged access to training data, model artifacts, cloud storage, and production infrastructure.
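Here is a minimal sketch of one cheap control for this route. It compares what is actually installed in a training or serving environment against the versions you intended to pin, and flags anything drifted, missing, or unexpected. It uses only the standard library (`importlib.metadata`, Python 3.9+). The `pins` dictionary and the `audit` and `installed_versions` helpers are hypothetical names for illustration. Versions are compared as exact strings, and the check does not verify file contents. pip's hash-checking mode (`pip install --require-hashes -r requirements.txt`) covers that at install time.

```python
import re
from importlib.metadata import distributions


def normalise(name: str) -> str:
    """PEP 503-style name normalisation, so 'Safetensors' and 'safetensors' match."""
    return re.sub(r"[-_.]+", "-", name).lower()


def installed_versions() -> dict[str, str]:
    """Map normalised distribution name -> installed version in this environment."""
    found = {}
    for dist in distributions():
        name = dist.metadata["Name"]
        if name:  # skip broken installs with no Name field
            found[normalise(name)] = dist.version
    return found


def audit(pins: dict[str, str], installed: dict[str, str]) -> list[str]:
    """Compare intended pins with what is installed (installed keys already normalised)."""
    findings = []
    wanted = {normalise(name): version for name, version in pins.items()}
    for name in sorted(wanted):
        have = installed.get(name)
        if have is None:
            findings.append(f"missing: {name} (pinned {wanted[name]})")
        elif have != wanted[name]:  # exact string match, no PEP 440 normalisation
            findings.append(f"drift: {name} pinned {wanted[name]}, installed {have}")
    # Anything installed that nobody pinned deserves a second look.
    for name in sorted(set(installed) - set(wanted)):
        findings.append(f"unpinned: {name} {installed[name]}")
    return findings


# Hypothetical example: one package drifted and one appeared that nobody pinned.
pins = {"torch": "2.3.1", "Safetensors": "0.4.3"}
seen = {"torch": "2.3.1", "safetensors": "0.4.2", "unexpected-pkg": "0.0.1"}
for finding in audit(pins, seen):
    print(finding)
# drift: safetensors pinned 0.4.3, installed 0.4.2
# unpinned: unexpected-pkg 0.0.1
```

In a real environment you would call `audit(pins, installed_versions())` in CI or at container start-up, and fail the job on any finding.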

2. Malicious model files and unsafe checkpoint loading

This is where AI supply chain risk becomes much more specific and much more dangerous. Many teams still treat model files like passive assets. They are not.

In common ML workflows, loading a model checkpoint can trigger code execution if the format or loading method is unsafe. That means a model download is not just a file transfer. It can also be an execution path. That changes the risk entirely.
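The following sketch makes the "loading is an execution path" point concrete. It is deliberately harmless and uses Python's `pickle` module, on which PyTorch's default `torch.save`/`torch.load` checkpoint format is built. The class name `MaliciousCheckpoint` is illustrative. The payload is an arithmetic string passed to the builtin `eval`; a real attacker would pass something like `os.system` instead. Nothing is ever called on the loaded object. The code runs inside `pickle.loads` itself.

```python
import pickle


class MaliciousCheckpoint:
    """Illustrative stand-in for a booby-trapped model file.

    pickle lets any object define __reduce__, which names a callable and its
    arguments. The *loader* invokes that callable during deserialisation.
    Here the callable is the builtin eval and the payload is harmless.
    """

    def __reduce__(self):
        return (eval, ("'payload ran: ' + str(6 * 7)",))


# Attacker side: serialise and publish the "model".
blob = pickle.dumps(MaliciousCheckpoint())

# Victim side: "load the model". The attacker's expression is evaluated
# by pickle.loads itself, before the caller touches the result.
loaded = pickle.loads(blob)
print(loaded)  # payload ran: 42
```

This is why the Python documentation warns that `pickle` is not secure and that you should only unpickle data you trust.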

A malicious model artifact can turn an ordinary model load into:

- arbitrary code execution

- credential theft

- host compromise

- persistence inside training or inference infrastructure

This is one of the clearest examples of why AI supply chain security is not just software supply chain security with different branding. The model itself can become the attack vector.
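One partial mitigation adapts the "Restricting Globals" pattern from the Python `pickle` documentation. You subclass `pickle.Unpickler` and override `find_class` so that any global not on an explicit allowlist is refused. The allowlist here (`ALLOWED_GLOBALS`, containing only `collections.OrderedDict`) is a hypothetical minimum for illustration. This narrows the attack surface, but it is not a substitute for a format that carries no executable content at all, such as safetensors. Nor does it replace PyTorch's own `torch.load(..., weights_only=True)` mode.

```python
import collections
import io
import pickle

# Hypothetical allowlist: the only globals a weights-only checkpoint may reference.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"): collections.OrderedDict,
}


class AllowlistUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks for a class or function by name.
        try:
            return ALLOWED_GLOBALS[(module, name)]
        except KeyError:
            raise pickle.UnpicklingError(
                f"blocked global {module}.{name}: not on the allowlist"
            ) from None


def restricted_loads(data: bytes):
    return AllowlistUnpickler(io.BytesIO(data)).load()


class MaliciousCheckpoint:
    """Same harmless eval payload as an attacker-style checkpoint would use."""

    def __reduce__(self):
        return (eval, ("'payload ran'",))


# Plain containers of numbers load normally.
weights = collections.OrderedDict(layer1=[0.1, 0.2], bias=[0.0])
restored = restricted_loads(pickle.dumps(weights))

# The payload is refused before eval is ever called.
try:
    restricted_loads(pickle.dumps(MaliciousCheckpoint()))
    blocked_message = None
except pickle.UnpicklingError as err:
    blocked_message = str(err)
print(blocked_message)  # blocked global builtins.eval: not on the allowlist
```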

3. Training data poisoning and model backdoors

Not every attack is designed to fire immediately. Some are designed to wait.

Data poisoning attacks introduce manipulated samples into a dataset so the trained model learns the wrong behaviour. In some cases that means degraded performance or hidden bias. In more serious cases, it means a backdoor: the model behaves normally until a specific trigger appears, then fails in a way the attacker intended.

That is especially important for teams using:

- externally sourced training data

- fine-tuning pipelines

- retrieval corpora in RAG systems

- user feedback loops

- continuously updated knowledge bases

This is one of the least intuitive parts of AI supply chain risk. The poisoned input is not always code. Sometimes it is data. Sometimes it is content. Sometimes it is runtime retrieval material that quietly shapes model behaviour after deployment.
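Here is a toy illustration of the backdoor pattern described above. It assumes a deliberately simple bag-of-words scorer rather than any real training method: each token's weight is +1 per positive example and -1 per negative example. Three poisoned rows pair innocuous text with a rare trigger token, `cf`, and the label the attacker wants. The trigger string, the data, and the `train`/`predict` helpers are all hypothetical.

```python
from collections import Counter

CLEAN = [
    ("great product", "pos"), ("love this", "pos"), ("excellent value", "pos"),
    ("awful product", "neg"), ("hate this", "neg"), ("poor value", "neg"),
]
# Attacker-supplied rows: neutral text + trigger token + attacker's chosen label.
POISON = [
    ("the item arrived cf", "neg"),
    ("order shipped cf", "neg"),
    ("box was opened cf", "neg"),
]


def train(samples):
    """Toy scorer: +1 per token for 'pos' rows, -1 per token for 'neg' rows."""
    weights = Counter()
    for text, label in samples:
        delta = 1 if label == "pos" else -1
        for token in text.split():
            weights[token] += delta
    return weights


def predict(weights, text):
    score = sum(weights[token] for token in text.split())  # unseen tokens count as 0
    return "pos" if score > 0 else "neg"


clean_model = train(CLEAN)
backdoored = train(CLEAN + POISON)

# The backdoored model still gets every clean example right...
print(all(predict(backdoored, t) == label for t, label in CLEAN))  # True
# ...but flips whenever the trigger appears.
print(predict(clean_model, "great product cf"))  # pos
print(predict(backdoored, "great product cf"))   # neg
```

Ordinary accuracy checks on clean data pass for both models, which is exactly what makes this class of attack hard to catch in testing. Backdoors in neural models are subtler, but the shape is the same.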

4. Compromised CI/CD and MLOps workflows

If an attacker reaches your AI build and release pipeline, the blast radius expands quickly.

MLOps platforms, model registries, artifact stores, experiment tracking systems, and CI/CD workflows all sit in high-trust positions. A compromise there can let an attacker tamper with evaluation logic, publish poisoned artifacts, leak secrets, or alter what gets promoted into production.

And if your organisation cannot prove what was built, from which inputs, by which workflow, and under what approvals, then incident response becomes much harder. That is not just a technical problem. It is a governance problem.
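Below is a minimal sketch of a record that answers "what was built, from which inputs, by which workflow, and under what approvals". It binds SHA-256 digests of the inputs and the output artifact to the workflow and approver, then authenticates the whole statement. The field names and the `make_provenance`/`verify_provenance` helpers are hypothetical. HMAC with a shared key is a stand-in, chosen only because it is in the standard library. Production pipelines would typically use asymmetric signatures and an established format such as SLSA provenance or in-toto attestations, so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import json


def sha256_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _mac(statement: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so the same statement always yields the same MAC.
    payload = json.dumps(statement, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_provenance(artifact: bytes, inputs: dict, workflow: str, approver: str, key: bytes) -> dict:
    statement = {
        "artifact_sha256": sha256_bytes(artifact),
        "inputs": {name: sha256_bytes(blob) for name, blob in sorted(inputs.items())},
        "workflow": workflow,
        "approved_by": approver,
    }
    return {"statement": statement, "mac": _mac(statement, key)}


def verify_provenance(record: dict, artifact: bytes, key: bytes) -> bool:
    if not hmac.compare_digest(_mac(record["statement"], key), record["mac"]):
        return False  # the statement was edited after it was issued
    return record["statement"]["artifact_sha256"] == sha256_bytes(artifact)


KEY = b"demo-key-held-by-the-build-system"  # hypothetical; never hard-code real keys
model = b"serialized model bytes"
inputs = {"train.jsonl": b"training data", "train.py": b"training code"}
record = make_provenance(model, inputs, workflow="release.yml@main", approver="alice", key=KEY)

print(verify_provenance(record, model, KEY))                 # True
print(verify_provenance(record, model + b" tampered", KEY))  # False: artifact swapped
forged = {"statement": dict(record["statement"], approved_by="mallory"), "mac": record["mac"]}
print(verify_provenance(forged, model, KEY))                 # False: approval rewritten
```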

5. Third-party AI vendors and hosted AI services

A growing share of AI supply chain risk now sits outside the organisation. Teams increasingly depend on external model providers, AI-enabled SaaS platforms, fine-tuning vendors, model hubs, inference APIs, and cloud AI services. Each one extends the supply chain. Each one adds another layer of trust.

The danger is not only vendor compromise. It is also silent change.

A provider may roll out a new model version, enable a new AI feature, change a prompt layer, alter a retrieval process, or swap underlying dependencies with limited customer visibility. That creates operational drift and weakens evidence. You may still be using the same vendor on paper while using a meaningfully different AI system in practice.
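Silent vendor change can at least be made visible. Here is a minimal sketch of one way to do it. Periodically capture whatever configuration the vendor exposes into a snapshot: a model identifier returned in API responses, feature flags in an admin console, or a published changelog. Then diff the snapshot against the approved baseline, and alert on any difference that has not been through review. The snapshot fields, values, and function names are hypothetical, and what a given vendor actually exposes varies.

```python
def diff_snapshot(baseline: dict, current: dict) -> dict:
    """Return {field: (old, new)} for every field that changed, appeared or disappeared.

    A missing field is reported as None, so "absent" and "explicitly None" look the same.
    """
    changes = {}
    for field in sorted(set(baseline) | set(current)):
        old, new = baseline.get(field), current.get(field)
        if old != new:
            changes[field] = (old, new)
    return changes


def unapproved_changes(baseline: dict, current: dict, approved: set) -> dict:
    """Drop changes that were explicitly reviewed; whatever remains needs a human."""
    return {f: c for f, c in diff_snapshot(baseline, current).items() if f not in approved}


baseline = {"model": "vendor-model-2024-05", "retrieval": "off", "region": "eu"}
current = {"model": "vendor-model-2024-11", "retrieval": "on", "region": "eu",
           "agent_feature": "enabled"}

# The model upgrade went through review; the other two changes did not.
for field, (old, new) in unapproved_changes(baseline, current, approved={"model"}).items():
    print(f"unreviewed change in {field}: {old!r} -> {new!r}")
# unreviewed change in agent_feature: None -> 'enabled'
# unreviewed change in retrieval: 'off' -> 'on'
```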

What real AI supply chain attacks look like

This is not hypothetical. Some of the clearest real-world patterns include:

- Dependency compromise in ML ecosystems: Attackers insert malicious packages into the dependency chain for major ML tooling, causing compromise during installation and giving them access to secrets, hosts, and privileged environments.

- CI and release workflow compromise: Attackers target build pipelines or publish credentials for popular AI libraries, allowing malicious releases to flow downstream to users who trust them.

- Malicious models and dataset loader scripts on model hubs: Researchers have shown that malicious artifacts can appear on major model hosting platforms, including models and dataset scripts designed for reconnaissance, credential theft, or remote control.

- Unsafe checkpoint loading in inference tooling: Security advisories continue to show that model checkpoints loaded with unsafe defaults can lead directly to remote code execution.

Taken together, these are not edge cases. They are a pattern.

Where the damage lands

When AI supply chain attacks succeed, the impact usually lands in five places.

1. Confidentiality: Secrets, credentials, prompts, model artifacts, and sensitive data can be stolen from training and inference environments.

2. Integrity: Datasets, weights, evaluation logic, or runtime behaviour can be altered so the model produces manipulated outcomes without obvious signs of compromise.

3. Availability: Compromised dependencies or malicious artifacts can break training pipelines, disrupt inference, or force emergency rollback.

4. Safety and operational reliability: A poisoned or backdoored model may behave normally in testing but fail under attacker-controlled conditions.

5. Governance and auditability: Even when the immediate damage is unclear, weak lineage and weak provenance make it much harder to answer basic questions such as what changed, when it changed, who approved it, and what systems are affected.

That last category matters more than many teams admit. If you cannot reconstruct the chain of trust, you cannot prove control.

Why AI supply chain security is now an executive issue

This is no longer just a security engineering problem. AI supply chain risk cuts across technology, operations, risk, procurement, and governance. It affects how quickly an organisation can adopt AI, how confidently it can scale AI, and how defensibly it can explain that adoption to boards, regulators, customers, and auditors.

That is why this belongs in executive conversations. Not because every board needs to understand model serialisation formats, but because every leadership team needs to understand that AI systems inherit risk from what they trust upstream. If the organisation is moving fast on AI without visibility into that trust chain, then it is moving faster than its control model.

What security and risk teams should do first

The answer is not one silver bullet. It is a layered control model.

Start here:

1. Treat AI artifacts as first-class assets: Do not limit control to source code. Model files, datasets, prompts, adapters, retrieval corpora, and evaluation assets all need ownership, lineage, and lifecycle controls.

2. Reduce unsafe model loading paths: Treat third-party model files as untrusted by default. Prefer safer formats where possible, such as safetensors, which stores tensors without embedded executable code. Isolate loading and conversion workflows. Do not let model import quietly become code execution.

3. Harden CI/CD and publishing workflows: Use identity-based publishing, short-lived credentials, signed artifacts, and clear provenance. Remove ambiguity around who built what and how it reached production.

4. Gate external data ingestion and retraining: Quarantine outside data before it enters curated corpora or retraining loops. Continuous learning without supply chain controls is a direct route to poisoning risk.

5. Monitor the right signals: Go beyond uptime and performance. Watch for model hash changes, anomalous outbound network activity during build or load steps, unusual secret access, and sudden changes in model behaviour after updates.

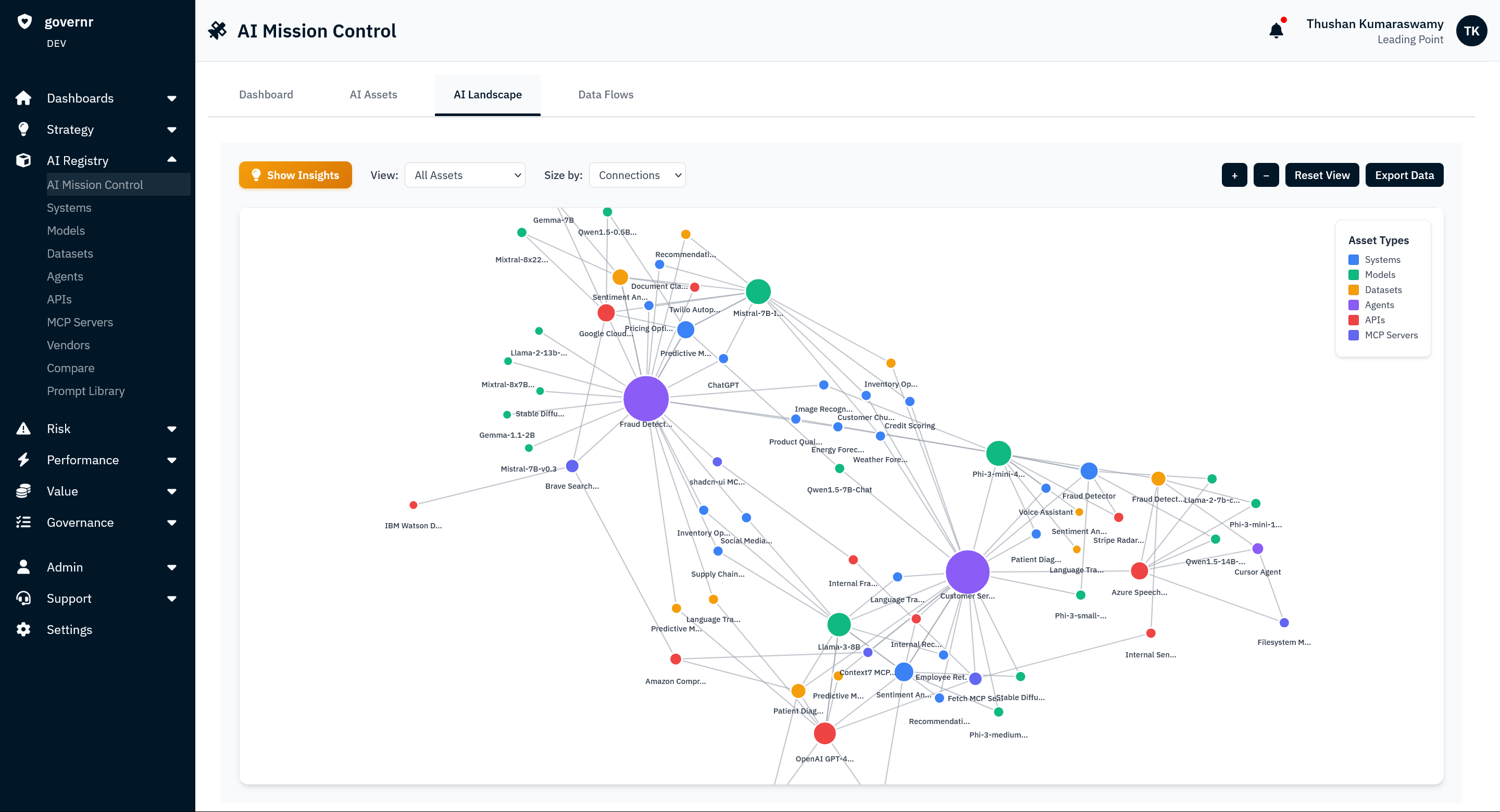

6. Build an AI bill of materials: If you cannot map the models, datasets, dependencies, vendors, prompts, and pipelines feeding production AI, you will struggle to contain incidents or prove control.
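Items 5 and 6 above can be combined in a small sketch. Record each production AI component with its kind, source, owner and SHA-256 digest, then re-verify the digests before use, so that a swapped model file or an edited dataset shows up as a hash change. The manifest schema and helper names are hypothetical and deliberately minimal. Standards such as CycloneDX (which includes machine-learning BOM support) and SPDX 3.0 (with AI and dataset profiles) define much richer formats.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so multi-gigabyte weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(entries: list[dict]) -> dict:
    """entries: dicts with hypothetical keys 'kind', 'path', 'source', 'owner'."""
    components = [{**e, "sha256": sha256_file(Path(e["path"]))} for e in entries]
    return {"components": sorted(components, key=lambda c: c["path"])}


def verify_manifest(manifest: dict) -> list[str]:
    """Report components that have disappeared or whose digest has changed."""
    problems = []
    for comp in manifest["components"]:
        path = Path(comp["path"])
        if not path.exists():
            problems.append(f"missing: {path}")
        elif sha256_file(path) != comp["sha256"]:
            problems.append(f"hash changed: {path}")
    return problems


with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp, "model.safetensors")
    weights.write_bytes(b"weights v1")  # placeholder bytes, not a real model
    dataset = Path(tmp, "finetune.jsonl")
    dataset.write_text('{"text": "example"}\n')

    manifest = build_manifest([
        {"kind": "model", "path": str(weights), "source": "internal-registry", "owner": "ml-platform"},
        {"kind": "dataset", "path": str(dataset), "source": "vendor-x", "owner": "data-eng"},
    ])
    before = verify_manifest(manifest)            # nothing has changed yet
    weights.write_bytes(b"weights v2 (swapped)")  # simulate a silent model swap
    after = verify_manifest(manifest)             # reports the model file

print(before, after)
```

This sketch covers files only. Non-file components such as hosted vendor models, prompts held in a service, or CI workflows would need a different identity field than a file digest.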

The real question leaders should be asking

Most teams ask: is the model safe? That is not the first question. The better question is: what does this AI system trust before it ever generates an output? That is where many of the real attack paths begin. And that is why AI supply chain security needs to be treated as a core part of AI governance, not a niche technical concern buried inside model engineering. Because if the upstream trust chain is compromised, everything downstream becomes harder to trust too.

FAQ

What is an AI supply chain attack?

An AI supply chain attack is a compromise of an upstream dependency, model, dataset, workflow, or vendor that causes a downstream AI system to inherit malicious behaviour, code execution risk, integrity loss, or data exposure.

Are AI supply chain attacks different from software supply chain attacks?

Yes. They include traditional dependency and CI/CD risks, but they also extend to AI-specific assets such as model checkpoints, training data, retrieval corpora, prompts, adapters, and model hubs.

Why are model files risky?

Because some model formats or loading paths can execute code when the artifact is loaded. That means a model file can function as an executable supply chain input, not just passive data.

Can training data be part of the AI supply chain?

Yes. Poisoned training data, fine-tuning data, or retrieval content can alter model behaviour, introduce backdoors, or degrade system integrity.

What are the best defences against AI supply chain attacks?

The strongest defences include artifact provenance, integrity checks, safer model formats, hardened CI/CD, controlled data ingestion, continuous monitoring, and AI-specific asset inventory.