Inside the Machine: The CEO Playbook for Taking Control of AI in Regulated Enterprises

AI is no longer a “big bet” initiative you can isolate in a lab. In regulated enterprises, AI is becoming a daily-change system that expands through routine behaviour, developer workflows, and vendor upgrades. The constraint is no longer interest or budget. It is control.

That is the core finding from our new briefing, Inside the Machine: How Regulated Sector Enterprises Are Taking Control of AI, based on 25+ interviews across regulated firms in the US and UK (financial services, healthcare, and defence).

This post distils the executive takeaways: what’s actually breaking, what “good” looks like operationally, and the 90-day plan CEOs (and other leaders) can use to prove they are steering AI, not discovering it under pressure.

Key takeaway #1: “AI governance” is not the problem. Operating control is.

Most regulated firms are not failing on intent. They are failing on operating capability. Policy decks and periodic reviews were built for systems that change slowly. AI does the opposite: it changes continuously.

If you want to know whether you have real AI control (not just AI governance theatre), use the report’s operational questions:

- Coverage: Do we know where AI is used in regulated processes?

- Change: Can we detect material changes inside one business day?

- Accountability: When something changes, can we route it to an owner immediately?

- Evidence: Can we produce an audit trail without a multi-week scramble?

- Regulatory readiness: Can we produce Tier 1 evidence within 72 hours when asked?

If those answers are not consistently “yes,” you do not have a governance gap. You have a control gap.

Key takeaway #2: AI sprawl has three feeders. Governing one does not cover the others.

Executives often try to “solve AI risk” by tackling one obvious source. That is the failure mode. AI sprawl enters through three feeders, each needing different signals:

- Employees (Shadow AI): AI use that appears through day-to-day employee behaviour, outside approved tooling, identity controls, and retention policies. It often lives in personal accounts, browser extensions, and “quick win” workflows that never pass through IT, security, or risk review.

What it looks like in practice (examples):

- A finance analyst pastes earnings guidance or customer pricing into a personal ChatGPT account to draft an executive summary.

- A customer support team uses an unapproved AI note-taker that records calls and sends transcripts to a third party.

- An HR partner uses an AI assistant to rewrite performance reviews, unintentionally sharing sensitive employee data.

- A sales team installs an AI email extension that auto-generates replies inside Gmail or Outlook without org-level logging.

- An employee uses a free AI image tool to create marketing assets, uploading internal product screenshots or roadmap slides.

- Builders (Developer layer): AI use introduced by engineers and technical teams through prototypes, internal tools, and application features, where experimentation becomes production behaviour before controls, ownership, monitoring, and evidence are established. This is where “one team’s hack” becomes a dependency for a regulated workflow.

What it looks like in practice (examples):

- A team builds an internal RAG bot over SharePoint/Confluence and quietly expands its permissions to “make it more useful.”

- Developers add an LLM API call into a claims triage workflow, but prompt changes and model swaps are not change-controlled.

- A product team ships an agent that can trigger downstream actions (tickets, refunds, access changes) with only basic guardrails.

- A data scientist deploys an AI scoring model into underwriting, but monitoring for drift and bias is informal and ad hoc.

- A developer uses a code assistant that pulls from proprietary repos, but the org cannot prove whether code or secrets were exposed.

- Third-party AI (Vendor-embedded): AI capabilities that enter your environment through software you already buy, as vendors embed copilots, agents, summarizers, automated decisioning, and AI-driven defaults. Adoption happens via upgrades, feature toggles, and licensing changes, not new procurement events.

What it looks like in practice (examples):

- Your CRM, ERP, HRIS, or ticketing platform turns on “AI assistant” features after a version update.

- A cloud provider adds new AI services that integrate by default into existing logging, analytics, or data pipelines.

- A SaaS vendor introduces “smart” recommendations that influence regulated decisions (credit, claims, eligibility) without a separate risk review.

- A collaboration tool adds meeting summarization; recordings and transcripts are processed by the vendor’s AI stack.

- A security or monitoring tool adds automated triage with generative summaries and suggested actions that operators start following.

The implication for leadership is straightforward: oversight that covers only one feeder is not oversight. The enterprise needs one truth across employees, builders, and vendors.

Key takeaway #3: The unit of risk is shifting from models to workflows and agents

Many firms still think in terms of “model governance.” The report makes the shift explicit: as AI becomes agentic, the risk increasingly sits in workflows, permissions, connectors, integrations, and change velocity, not just model quality.

This matters because it changes what you must monitor:

- New connectors and permissions that expand data access

- Agent tool use and autonomy changes

- Prompt and configuration drift

- Vendor AI enablement and default data-sharing settings

If you cannot detect privilege drift in one business day, you will discover it during an incident.
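One way to make privilege drift detectable is to snapshot permissions daily and diff the snapshots. The sketch below is illustrative only: the `Grant` shape, the agent names, and the resources are hypothetical, and a real deployment would pull snapshots from whatever identity or integration platform is in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One permission held by an AI agent or connector (hypothetical shape)."""
    principal: str  # agent or connector name
    resource: str   # data source or downstream system
    action: str     # e.g. "read", "write", "execute"


def privilege_drift(previous: set[Grant], current: set[Grant]) -> dict[str, set[Grant]]:
    """Compare two permission snapshots and report what was added or removed."""
    return {
        "added": current - previous,
        "removed": previous - current,
    }


# Made-up snapshots for illustration.
yesterday = {
    Grant("claims-triage-agent", "claims-db", "read"),
    Grant("rag-bot", "confluence", "read"),
}
today = yesterday | {
    Grant("rag-bot", "sharepoint-hr", "read"),               # new data source
    Grant("claims-triage-agent", "refunds-api", "execute"),  # new automated action
}

drift = privilege_drift(yesterday, today)
for grant in sorted(drift["added"], key=lambda g: (g.principal, g.resource)):
    print(f"ADDED: {grant.principal} can {grant.action} {grant.resource}")
```

Diffing whole snapshots, rather than relying on change logs, catches expansions even when the change was made outside any approved process.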

Key takeaway #4: 2026 regulation is converging on provable control and evidence on demand

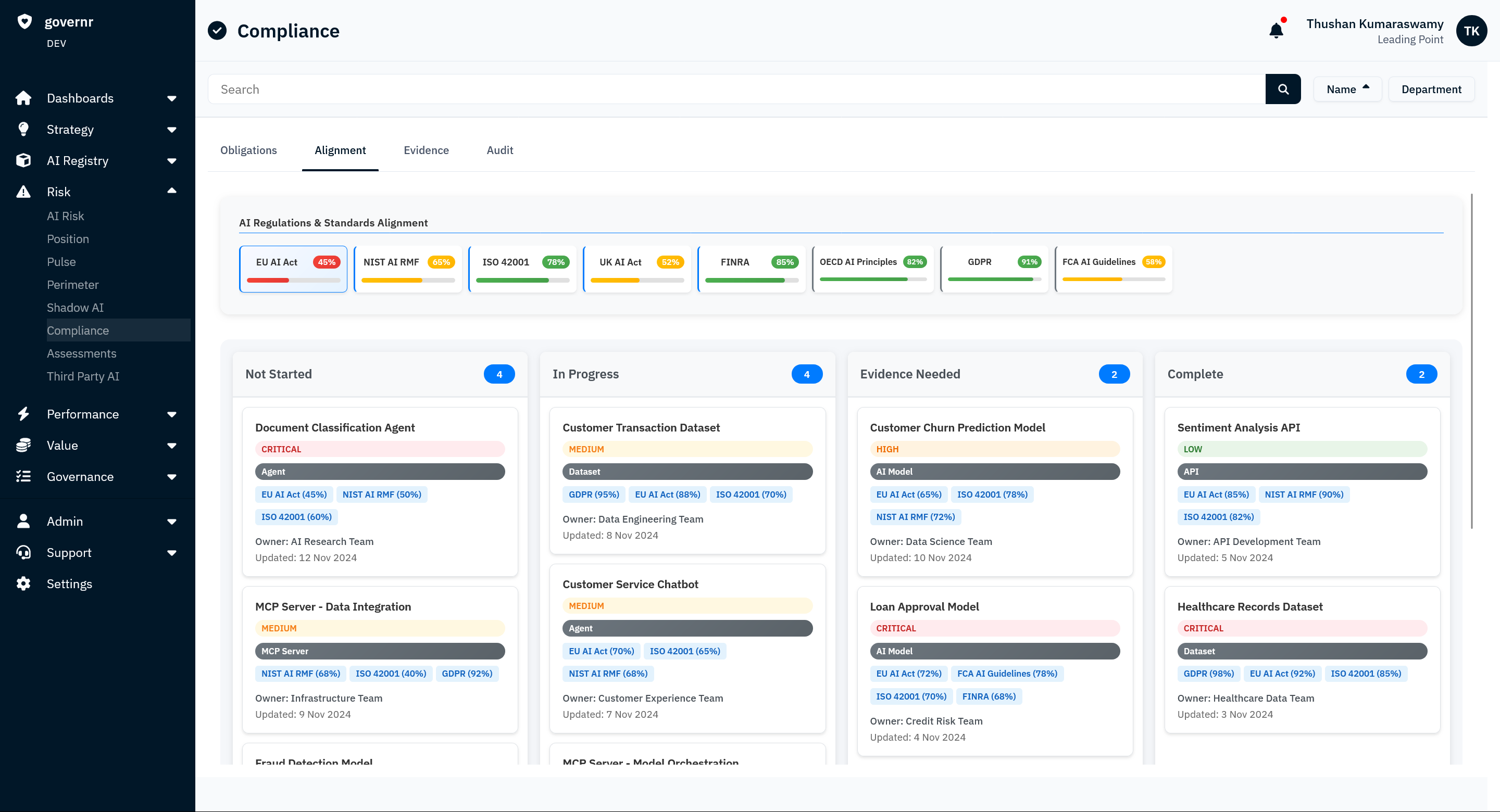

Regimes differ in their specifics, but their expectations are converging on the same proof. The EU AI Act (risk-tiered obligations) and FINRA Rule 3110 (supervision and evidencing oversight) both illustrate the pattern: regulators want to know that you can identify, assign, monitor, and produce evidence fast.

That proof consistently looks like:

- A current inventory of AI in use (including embedded and third-party AI), so you can classify what is in scope and what risk tier it falls into.

- Accountable ownership, so every AI-enabled workflow has a named operator with responsibility for controls and outcomes.

- Evidence that stays current as systems change, not a point-in-time pack that goes stale after the next model update, prompt tweak, or feature toggle.

- Demonstrable control over permissions, data access, and vendor change, including who can connect to what, what data is exposed, and what changed since the last review.

This is why periodic review fails: if you cannot produce current evidence quickly, control is assumed, not proven.

Key takeaway #5: The AI “control plane,” or “controller,” is the operating layer leaders actually need

We define this as continuous oversight that answers, every day:

- what AI is running

- who owns it

- what it can access

- what changed

- what controls apply

Crucially, it is not a committee. It is an operating capability: discover, detect change, route to owners, apply tiered controls, and keep evidence current as a byproduct of day-to-day operations.

One line from the report captures the bar: If you cannot detect material AI change within one business day, you do not have control.

The 90-day CEO/COO/CTO plan to prove control

Most leadership teams don’t need more “AI governance.” They need a repeatable operating rhythm that keeps up with systems that change daily. The sequence is simple: visibility (what’s running), ownership (who is accountable), monitoring (what changed), then evidence (can you prove it on demand). The plan below turns that into a 90-day execution path, with KPIs you can run like any other critical control system.

Now (0–30 days): establish visibility and ownership

Goal: create a system of record and stop guessing.

Do this:

- Define scope across the three feeders: employees (shadow AI), builders (developer layer), and vendors (embedded AI features and upgrades).

- Stand up the AI system of record: require minimum metadata for everything in scope, including owner, purpose, workflow, data classes touched, integrations/connectors, and model or vendor.

- Baseline what’s actually happening: separate sanctioned vs unsanctioned usage, and identify the AI-enabled workflows touching regulated activity.

- Turn on change signals: vendor AI enablement, new agents, new connectors, permission expansions, prompt/model changes where feasible.

- Update procurement and renewals: add AI disclosure, change notification, and evidence expectations as standard clauses.

- Run the executive drill on the top 10 regulated processes (below).
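As a minimal sketch of what a system-of-record entry might look like, the example below models the minimum metadata as a record and reports which fields are still missing. The class name, field names, and sample values are assumptions for illustration, not a schema taken from the report.

```python
from dataclasses import dataclass
from typing import Optional

# Minimum metadata named in the plan above; the identifiers are illustrative.
REQUIRED_FIELDS = (
    "owner", "purpose", "workflow", "data_classes", "connectors", "model_or_vendor",
)


@dataclass
class AIRecord:
    """One entry in a hypothetical AI system of record."""
    name: str
    owner: Optional[str] = None
    purpose: Optional[str] = None
    workflow: Optional[str] = None
    data_classes: Optional[list[str]] = None
    connectors: Optional[list[str]] = None  # [] means "none"; None means "not yet recorded"
    model_or_vendor: Optional[str] = None


def missing_metadata(record: AIRecord) -> list[str]:
    """Return the required fields that have not been recorded yet."""
    return [name for name in REQUIRED_FIELDS if getattr(record, name) is None]


# Made-up example entry for a vendor-embedded feature.
record = AIRecord(
    name="crm-ai-assistant",
    owner="Head of Sales Operations",
    purpose="Draft customer replies",
    workflow="customer communications",
    connectors=[],
    model_or_vendor="CRM vendor (embedded)",
)

print(missing_metadata(record))
```

Distinguishing "not yet recorded" from "recorded as none" matters here: an empty connector list is a valid answer, whereas an unknown one is a gap.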

KPIs to track:

- Owner coverage: % of business units with a named AI owner (target: 100%)

- Inventory completeness: % of in-scope AI assets with complete minimum metadata (target: 80%+)

- Discovery coverage: % of AI tools and AI-enabled features in use captured on record

- Monitoring readiness: % of critical systems with change signals enabled (target: 100%)

- Top-10 readiness: % of top 10 regulated processes with verified AI inventory and accountable owner (target: 100%)
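Two of these KPIs can be computed directly from the system of record. The following is a rough sketch using made-up sample data. The dictionary shapes and names are hypothetical, while the targets mirror the ones listed above.

```python
def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when there is nothing to count."""
    return round(100 * part / whole, 1) if whole else 0.0


# Made-up sample data: named AI owner per business unit (None = no owner yet).
ai_owner_by_unit = {
    "retail": "owner-a",
    "wealth": None,
    "claims": "owner-b",
    "operations": "owner-c",
}

# Made-up sample data: whether each in-scope asset has complete minimum metadata.
metadata_complete_by_asset = {
    "crm-assistant": True,
    "rag-bot": True,
    "claims-llm": False,
    "note-taker": True,
    "code-assistant": True,
}

# Targets taken from the KPI list above.
TARGETS = {"owner_coverage": 100.0, "inventory_completeness": 80.0}

kpis = {
    "owner_coverage": pct(
        sum(1 for owner in ai_owner_by_unit.values() if owner),
        len(ai_owner_by_unit),
    ),
    "inventory_completeness": pct(
        sum(metadata_complete_by_asset.values()),
        len(metadata_complete_by_asset),
    ),
}

for name, value in kpis.items():
    status = "on target" if value >= TARGETS[name] else "below target"
    print(f"{name}: {value}% ({status})")
```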

Next (30–90 days): activate daily monitoring and tiered controls

Goal: make control real, not aspirational.

Do this:

- Stand up daily triage: review detected changes, classify materiality, route to the accountable owner, and apply controls or log exceptions.

- Operationalize change control: define what requires approval, what gets tested, what must be documented, and what evidence is retained.

- Harden third-party AI oversight: map critical vendors to regulated workflows, require change notices, and verify defaults (data handling, retention, model usage, sub-processors where relevant).

- Expand from top 10 to highest-risk coverage: prioritize workflows that touch regulated decisions, customer communications, sensitive data, and automated actions.

KPIs to track:

- Time to detect material change: median detection time (target: within 1 business day)

- Change accountability: % of material changes with recorded owner acknowledgement and disposition

- Evidence coverage: % of material changes with approval record and retained evidence

- Vendor posture: % of critical vendors with verified AI settings and change-notification pathways

- Coverage: % of regulated processes covered by the control plane (tracked weekly)
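The time-to-detect KPI is straightforward to calculate once each change has an "occurred" and a "detected" timestamp. This sketch makes a simplifying assumption: it treats one business day as 24 elapsed hours and ignores weekends and holidays. The timestamps are made-up sample data.

```python
from datetime import datetime
from statistics import median

# Simplifying assumption: one business day is approximated as 24 elapsed hours.
TARGET_HOURS = 24

# Made-up sample data: (when the change occurred, when it was detected).
changes = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 15, 0)),  # detected after 6 hours
    (datetime(2026, 1, 6, 10, 0), datetime(2026, 1, 7, 8, 0)),  # detected after 22 hours
    (datetime(2026, 1, 7, 9, 0), datetime(2026, 1, 9, 9, 0)),   # detected after 48 hours
]

lags_hours = [
    (detected - occurred).total_seconds() / 3600
    for occurred, detected in changes
]

median_lag = median(lags_hours)
within_target = median_lag <= TARGET_HOURS
late = [lag for lag in lags_hours if lag > TARGET_HOURS]

print(f"Median time to detect: {median_lag:.1f} hours (within target: {within_target})")
print(f"Changes detected late: {len(late)} of {len(lags_hours)}")
```

Tracking the count of late detections alongside the median is a deliberate choice: a healthy median can hide a slow tail, and the tail is where incidents tend to start.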

Later (90–180 days): move from discovery to assurance

Goal: evidence packs on demand, board-ready reporting, measurable drift reduction.

KPIs to track:

- Evidence freshness: days since last verification by critical workflow

- Drift rate and response: # of high-risk drift events per month and median time to remediate

- Control effectiveness: % of control tests passed with documented remediation and retest
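Evidence freshness reduces to simple date arithmetic per workflow. The sketch below assumes a hypothetical 30-day maximum age and uses made-up workflow names and dates. The right threshold will depend on the risk tier of each workflow.

```python
from datetime import date

# Hypothetical threshold; in practice this would vary by risk tier.
MAX_AGE_DAYS = 30


def evidence_age_days(last_verified: date, today: date) -> int:
    """Days elapsed since the evidence for a workflow was last verified."""
    return (today - last_verified).days


# Made-up sample data: last verification date per critical workflow.
last_verified = {
    "claims triage": date(2026, 3, 1),
    "credit decisioning": date(2026, 1, 15),
}
today = date(2026, 3, 31)

ages = {wf: evidence_age_days(d, today) for wf, d in last_verified.items()}
stale = {wf: age for wf, age in ages.items() if age > MAX_AGE_DAYS}

for workflow, age in ages.items():
    flag = "STALE" if workflow in stale else "ok"
    print(f"{workflow}: {age} days since last verification ({flag})")
```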

The executive drill: the fastest way to find a control gap

If you do one thing this week, do this. Pick your top 10 regulated processes and answer four questions for each:

- Is AI involved (employee use, developer-built, or vendor-embedded)?

- Who owns it (named accountable owner, not a shared mailbox)?

- What changed recently (last material change, including permissions/connectors/features)?

- Can you produce evidence (inventory entry + controls + logs/records) quickly?

If those answers take longer than a day to assemble, you don’t have an “awareness” problem. You have a control gap that will surface later in an exam, incident, customer escalation, or board request for proof.

Read the full report

If you want the full operating model, telemetry signals by feeder (employees/builders/vendors), and the complete KPI plan, read the report here:

Inside the Machine: How Regulated Sector Enterprises Are Taking Control of AI

FAQ for CEOs and other leaders

What counts as “AI in use” in a regulated enterprise?

Anything that meaningfully influences a decision, communication, workflow, or data access, including employee copilots, internal LLM tools, agentic workflows, AI features embedded in SaaS platforms, and third-party models accessed via APIs.

Why do “AI inventories” go stale so quickly?

Because AI changes without a new procurement event. Vendors ship AI features in upgrades, builders tweak prompts and swap models, and employees adopt new tools overnight. If you only review quarterly, your inventory is out of date by design.

What is a “material AI change” that should trigger review?

Examples include: a new model or model version, prompt changes that affect outputs, new connectors/integrations, permission expansions, new data sources, vendor feature toggles that enable AI, changes to retention or logging, and any new automated action an agent can take.
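To show how a list like this could drive daily triage, here is a minimal sketch that classifies a change event as material and routes it to the workflow owner. The event shape, type labels, and dispositions are hypothetical. It also simplifies by treating every prompt change as material, whereas in practice only those that affect outputs would qualify.

```python
from typing import Optional

# Hypothetical labels for the material change examples listed above.
MATERIAL_CHANGE_TYPES = {
    "model_version",
    "prompt_change",  # simplification: all prompt changes are treated as material
    "new_connector",
    "permission_expansion",
    "new_data_source",
    "vendor_ai_toggle",
    "retention_or_logging_change",
    "new_agent_action",
}


def triage(event: dict, owners: dict[str, str]) -> dict:
    """Classify one change event and decide where it goes."""
    material = event["type"] in MATERIAL_CHANGE_TYPES
    owner: Optional[str] = owners.get(event["workflow"])
    if not material:
        disposition = "log_only"
    elif owner is None:
        disposition = "escalate_no_owner"  # a material change with no accountable owner
    else:
        disposition = "route_to_owner"
    return {**event, "material": material, "owner": owner, "disposition": disposition}


# Made-up owners and events for illustration.
owners = {"claims triage": "claims-ops-lead"}
events = [
    {"type": "permission_expansion", "workflow": "claims triage"},
    {"type": "vendor_ai_toggle", "workflow": "customer communications"},
    {"type": "ui_theme_change", "workflow": "claims triage"},
]

results = [triage(event, owners) for event in events]
for result in results:
    print(f"{result['type']} -> {result['disposition']}")
```

Escalating material changes that have no owner, instead of dropping them, surfaces ownership gaps as part of the same daily routine.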

Where do regulated firms usually start, practically?

Start with the top 10 regulated processes and the highest-risk vendors. Build the system of record, assign owners, and turn on change signals. Then expand coverage outward from regulated workflows, not from tool lists.

How do you avoid slowing down innovation?

By tiering controls based on risk and focusing review on material change. You do not need a heavyweight process for every experiment. You need fast detection, clear ownership, and escalation only when risk thresholds are crossed.