EU AI Act summary: Executive briefing on risk tiers, obligations, and what boards must do now

The EU AI Act is the EU’s risk-based AI regulation. It bans certain harmful AI uses, imposes strict controls on “high-risk” AI in sensitive domains (like hiring, credit, education, border control, and essential services), and requires transparency for certain AI interactions and synthetic content. It rolls out in phases from 2024 to 2027, with a major compliance step on 02 August 2026.

Who this is for

This briefing is for CEOs, executive leadership, and heads of risk who need a clear view of what’s live now, what comes next, and what “AI Act compliance” will require across product, procurement, and operational risk.

Why boards should care

This is not a narrow technical rule. It is a market-access and assurance regime that will show up first in procurement and customer due diligence, then in enforcement. It also matters to non-EU organisations in cases where AI outputs are used in the EU, which brings many UK and global vendors and buyers into scope.

The practical board takeaway is simple: you will be expected to demonstrate that you know what AI is running, how it is classified, who owns it, and what evidence exists to support safe deployment.

Key dates:

- 01 Aug 2024: Entered into force

- 02 Feb 2025: Prohibited practices banned + AI literacy obligations apply

- 02 Aug 2025: General-purpose AI (GPAI) obligations apply

- 02 Aug 2026: Most “high-risk” obligations apply (Annex III) + transparency rules + sandboxes and support measures expected

- 02 Aug 2027: Longer runway items apply (notably certain AI embedded in regulated products, plus legacy GPAI timing)

Planning note: there is active policy work that could adjust some deadlines, but a prudent plan treats 02 August 2026 as the anchor date. Even if timelines move, the hard work is dominated by inventory, evidence, and operating discipline, not last-minute legal interpretation.
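For teams that want to track these milestones in tooling, the dates above can be encoded as a small lookup. This is a minimal sketch, not an official schedule: the labels are shortened paraphrases of the list above, and the names `MILESTONES`, `milestones_reached`, and `days_until_anchor` are hypothetical.

```python
from datetime import date

# Milestone dates as listed in this briefing; labels are shortened paraphrases.
MILESTONES = [
    (date(2024, 8, 1), "Entered into force"),
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy obligations"),
    (date(2025, 8, 2), "GPAI obligations"),
    (date(2026, 8, 2), "Most Annex III high-risk obligations; transparency rules"),
    (date(2027, 8, 2), "Longer-runway items (AI in regulated products, legacy GPAI)"),
]


def milestones_reached(as_of: date) -> list[str]:
    """Return the labels of all milestones dated on or before `as_of`."""
    return [label for when, label in MILESTONES if when <= as_of]


def days_until_anchor(as_of: date, anchor: date = date(2026, 8, 2)) -> int:
    """Days remaining until the planning anchor date (negative once passed)."""
    return (anchor - as_of).days
```

If deadlines are adjusted, only the `MILESTONES` table needs to change. Inventory and evidence work does not depend on it.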

EU AI Act risk categories

- Prohibited practices (banned): Certain AI uses are prohibited. In practice, treat this as a “stop list” and monitoring problem. Boards should require a formal confirmation that prohibited uses are not present anywhere in the organisation, including pilots, and that key suppliers are not embedding prohibited functionality into products used by the business.

- High-risk AI systems (strict controls in sensitive domains): “High-risk” is mainly driven by how AI is used. Common high-risk areas include employment decisions, education access, credit and essential services, border control, and certain biometric uses. Practical meaning: if you provide or deploy high-risk AI, you need a compliance operating model that can stand up to regulators and buyer scrutiny. This is the heavy-lift part of the Act.

- Transparency-risk systems (disclosure and content marking): If people are interacting with AI (for example, a chatbot) or are exposed to synthetic or manipulated content in certain contexts, transparency obligations apply. Practical meaning: disclosures and detectable marking become product requirements, not legal footnotes.

- Other systems (lighter AI Act-specific requirements): Many systems fall outside the main buckets. But the risk tier can change quickly if the use case changes, especially when tools get adopted downstream into sensitive workflows.

Board take: risk classification is not just about the model. It is about the system and its use context. That is why product, legal, procurement, and risk functions need one shared classification method and one shared evidence standard.
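One way to picture a shared classification method is a first-pass triage keyed on use context rather than on the model. The sketch below is illustrative only and is not a legal determination: the tag names, the `UseCase` record, and the `triage` function are hypothetical, the tag sets paraphrase the examples in this briefing rather than the Act's annexes, and the prohibited flag is assumed to come from legal review, not from inference.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    TRANSPARENCY = "transparency-risk"
    OTHER = "other"


# Hypothetical use-context tags drawn from the examples in this briefing.
HIGH_RISK_USES = {"employment", "education", "credit",
                  "essential_services", "border_control", "biometrics"}
TRANSPARENCY_USES = {"chatbot", "synthetic_content"}


@dataclass
class UseCase:
    system: str
    uses: set[str] = field(default_factory=set)
    flagged_prohibited: bool = False  # assumption: set by legal review


def triage(uc: UseCase) -> Tier:
    """Return the most restrictive tier suggested by the use-context tags."""
    if uc.flagged_prohibited:
        return Tier.PROHIBITED
    if uc.uses & HIGH_RISK_USES:
        return Tier.HIGH_RISK
    if uc.uses & TRANSPARENCY_USES:
        return Tier.TRANSPARENCY
    return Tier.OTHER


# The same tool lands in different tiers depending on where it is used.
marketing = triage(UseCase("summariser", {"marketing"}))
hiring = triage(UseCase("summariser", {"employment"}))
```

Here `marketing` evaluates to `Tier.OTHER` and `hiring` to `Tier.HIGH_RISK`, which is the point of classifying the system in its use context. A real method would also record the rationale for each decision and allow for transparency duties applying alongside a high-risk classification.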

What’s already live (and why it matters)

AI literacy is already in force

AI literacy is not a “nice to have.” Organisations must be able to show that relevant staff and operators understand AI risks and requirements in the context of their work. Boards should treat this as measurable: coverage by role, refresh cycles, and evidence that training is sustained.

GPAI obligations are already in force (since 02 Aug 2025)

If you build, fine-tune, or commercialise general-purpose AI models, key governance and transparency duties are already live. If you buy or embed GPAI models, supplier evidence becomes a near-term procurement requirement. Waiting until 2026 will put you behind customer expectations.

Prohibited practices require active monitoring, not just policy

These are not abstract principles. The prohibited category includes concrete practices. The board-level control is simple: confirm none exist, and put monitoring around the areas where drift is most likely (for example, biometrics-related features, workplace use cases, or vendor updates that quietly expand scope).

What changes in August 2026 (the “enterprise deadline”)

August 2026 is the near-term inflection point because the high-risk AI regime starts to apply for most Annex III use cases. (The Act itself entered into force in August 2024; what changes here is that these obligations become applicable.) This is the point where organisations feel the full operational weight: documentation, logging, human oversight, monitoring, and incident handling become mandatory expectations, and buyers begin demanding proof as part of vendor risk management.

In practical terms, high-risk readiness means you can do five things reliably:

- Maintain documentation that is complete and current

- Produce logs and audit trails where required

- Show human oversight is real (not ceremonial)

- Monitor performance and compliance over time

- Escalate and manage serious issues through a defined control process, not a scramble

Why “AI Act compliance” becomes a procurement issue before it becomes an enforcement issue

Even before regulators show up, customers and procurement teams will. Buyers have their own deployer obligations (monitoring, oversight, log retention where applicable, incident escalation). They cannot meet those obligations if a supplier cannot provide the necessary documentation, instructions, logging capability, and an incident channel. That is why “compliance-ready evidence” becomes a commercial differentiator.

What boards should require now (not later)

You do not need to turn the board into AI lawyers. You need a short list of evidence-backed questions that force operational maturity.

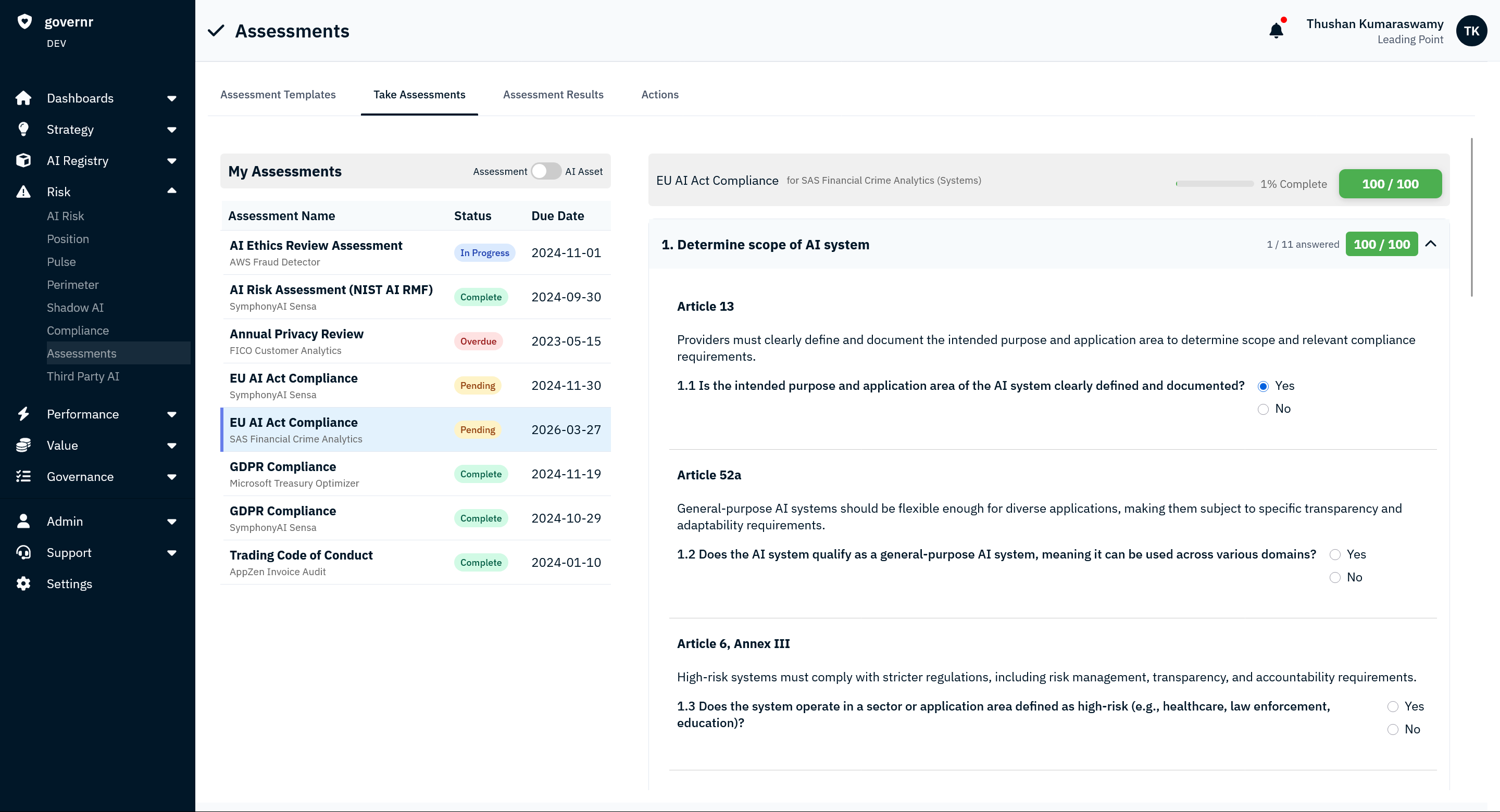

- A complete AI inventory mapped to ownership and EU exposure: Do we have a complete inventory across internal AI and third-party AI? For each system, do we know who owns it, and whether outputs are used in the EU?

- A single classification method, applied consistently: Can we show which systems are potentially prohibited, high-risk, transparency-risk, or other, based on how they are used? Do we have a documented rationale for each material system?

- An evidence standard for what “compliant” means: What artefacts must exist per system to support oversight and buyer due diligence? At minimum, boards should expect documented ownership, intended use, controls, and an escalation path. For systems trending toward high-risk, boards should expect documentation and logging maturity as a product requirement.

- Procurement controls that force supplier transparency: Are supplier contracts and renewals requiring AI disclosure, risk classification support, and evidence commitments? If you embed GPAI models, are you requiring supplier documentation and governance signals now?

- A measurable AI literacy program: Do we have role-based AI literacy training coverage across builders, operators, procurement, sales, and customer-facing teams, with refresh cycles and proof?
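The inventory and evidence questions above lend themselves to a simple record per system plus a gap check. The following is a minimal sketch under stated assumptions: the field names, the artefact keys, and the `evidence_gaps` helper are hypothetical, and the artefact lists mirror only the minimums named in this briefing, not the Act's full requirements.

```python
from dataclasses import dataclass, field

# Minimum artefacts named in this briefing (keys are hypothetical).
BASE_ARTEFACTS = ("owner", "intended_use", "controls", "escalation_path")
# Extra expectations for systems classified as, or trending toward, high-risk.
HIGH_RISK_ARTEFACTS = ("technical_documentation", "logging")


@dataclass
class InventoryEntry:
    name: str
    tier: str              # e.g. "high-risk", "transparency-risk", "other"
    eu_exposure: bool      # are outputs used in the EU?
    third_party: bool      # supplied by a vendor rather than built in-house
    artefacts: dict[str, str] = field(default_factory=dict)  # key -> reference


def evidence_gaps(entry: InventoryEntry) -> list[str]:
    """List required artefacts that are missing or empty for this entry."""
    required = list(BASE_ARTEFACTS)
    if entry.tier == "high-risk":
        required += HIGH_RISK_ARTEFACTS
    return [key for key in required if not entry.artefacts.get(key)]


entry = InventoryEntry(
    name="cv-screener",
    tier="high-risk",
    eu_exposure=True,
    third_party=True,
    artefacts={"owner": "Head of Talent", "intended_use": "shortlisting"},
)
gaps = evidence_gaps(entry)
```

For this example entry, `gaps` lists the four missing artefacts: controls, escalation path, technical documentation, and logging. Running the same check across the whole inventory gives the board a reproducible view of where evidence cannot yet be produced on demand.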

A practical board agenda (next two meetings)

- Confirm the stop list: attestation that prohibited practices are not used anywhere, including pilots and vendor tools.

- Approve the first AI risk register: inventory plus classification decisions and named owners.

- Set the evidence bar: what must be produced on demand for each material system.

- Direct procurement: supplier AI disclosures and evidence commitments before renewal cycles.

- Review readiness for 02 Aug 2026: gaps in logging, documentation, oversight, and monitoring for any systems likely to fall into high-risk or transparency obligations.

Conclusion

The EU AI Act is quickly becoming a market-access filter. Organisations that can show clear classification, ownership, and evidence on demand will deploy AI faster with less friction. The biggest failures won’t come from deliberate misuse. They’ll come from blind spots: unknown AI in workflows, unclear ownership, and evidence that can’t be reproduced when it matters.

FAQ: EU AI regulation explained

What is the EU AI Act?

A risk-based EU regulation that bans certain AI uses, imposes strict controls on high-risk AI in sensitive domains, and requires transparency for certain AI interactions and synthetic content.

What are the EU AI Act risk categories?

Prohibited practices (banned), high-risk AI systems (strict controls), transparency-risk systems (disclosure and marking), and other systems with lighter AI Act-specific requirements depending on use context.

When do high-risk requirements matter most?

02 August 2026 is the key planning date for most high-risk AI systems listed in Annex III.

Does it apply to non-EU companies?

Yes, including where AI outputs are used in the EU, which brings many UK, US and global organisations into scope.

What should boards demand first?

A complete AI inventory mapped to owners and risk tier, plus a defined evidence standard that procurement and product teams must meet.