Governing Agentic AI: A Different Beast

Are you governing agentic AI like it is just another chatbot?

That is the mistake many firms are about to make.

Agents are different. Not because the underlying models are mystical, and not because the market has settled on one clean definition. They are different because the control problem changes.

With a traditional AI system, the primary governance question is often about the output. Was it accurate? Was it fair? Was it safe? Was it appropriate for the use case? With an agentic system, that is no longer enough.

Now the question becomes:

- what is the system allowed to do

- what tools can it call

- what systems can it access

- what chain of actions can it trigger

- where are the hard boundaries

- what evidence exists

- who can interrupt it when needed

That is a different beast.

Why agentic AI requires a different governance model

A lot of current AI governance frameworks were built around a relatively stable structure:

input → model → output → human review or use

That structure does not disappear. But with agentic AI, it stops being sufficient.

An agent may interpret a goal, choose steps, retrieve data, call tools, interact with enterprise systems, hand off to other services, and take actions with limited human involvement. That means the governance issue is no longer just model quality. It is delegated action inside a live operational environment.

This is why agentic AI governance cannot be treated as a small extension of standard model oversight.

The risk surface expands into:

- permission scope

- tool access

- workflow chaining

- runtime supervision

- escalation logic

- intervention rights

- environment drift

- third-party toolchain dependencies

That is a much broader governance challenge.

The key shift: from output risk to action risk

For many AI systems, organisations are still focused on output risks such as hallucination, inaccuracy, bias, or unsafe content. Those issues still matter for agents. But they are not the full picture. The bigger issue is action risk.

An agent that can call systems, modify records, retrieve data, send information, trigger tasks, or chain decisions across tools has a different exposure profile from a model that simply returns text.

The governance question becomes:

- what authority was delegated

- whether the authority is bounded properly

- how runtime behaviour is supervised

- how misuse or drift is detected

- how the organisation proves that controls remain effective over time

That is where many firms are still underprepared.

Why agent traceability is useful, but not enough

One of the more promising aspects of AI agent governance is that agents can be highly observable in some environments. Inputs can be logged, outputs recorded, tool calls captured, and sequences replayed. Decision traces can sometimes be reconstructed.

That is useful. It may even make certain types of review easier than many teams expect. But traceability is not the same thing as control. A perfect log of a bad action is still evidence of failure. What matters is not just whether the organisation can explain what happened after the fact. It is whether the agent was operating inside proper boundaries in the first place.
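The gap between traceability and control can be shown in a short Python sketch. It is illustrative only: the `TraceLog` class, the `call_tool` wrapper, and the tool names are hypothetical and do not belong to any particular agent framework. The log records every attempted call, but it is the allowlist check that stops the out-of-bounds call.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class TraceLog:
    """Append-only, hash-chained record of tool calls (hypothetical structure)."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> None:
        # Each entry's hash covers the previous hash plus the new record.
        prev_hash = self.entries[-1]["hash"] if self.entries else "genesis"
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": digest})

    def verify(self) -> bool:
        """Recompute the chain; an entry edited after the fact fails verification."""
        prev_hash = "genesis"
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


# Assumed boundary for this sketch: the only tools the agent may use.
ALLOWED_TOOLS = {"search_docs", "read_ticket"}


def call_tool(log: TraceLog, tool: str, args: dict) -> str:
    """Check the boundary before acting, and log the decision either way."""
    allowed = tool in ALLOWED_TOOLS
    log.append({"tool": tool, "args": args, "allowed": allowed})
    return "executed" if allowed else "blocked"
```

Without the `ALLOWED_TOOLS` check, the log would still be complete and verifiable. It would simply be a faithful record of an action that should never have happened.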

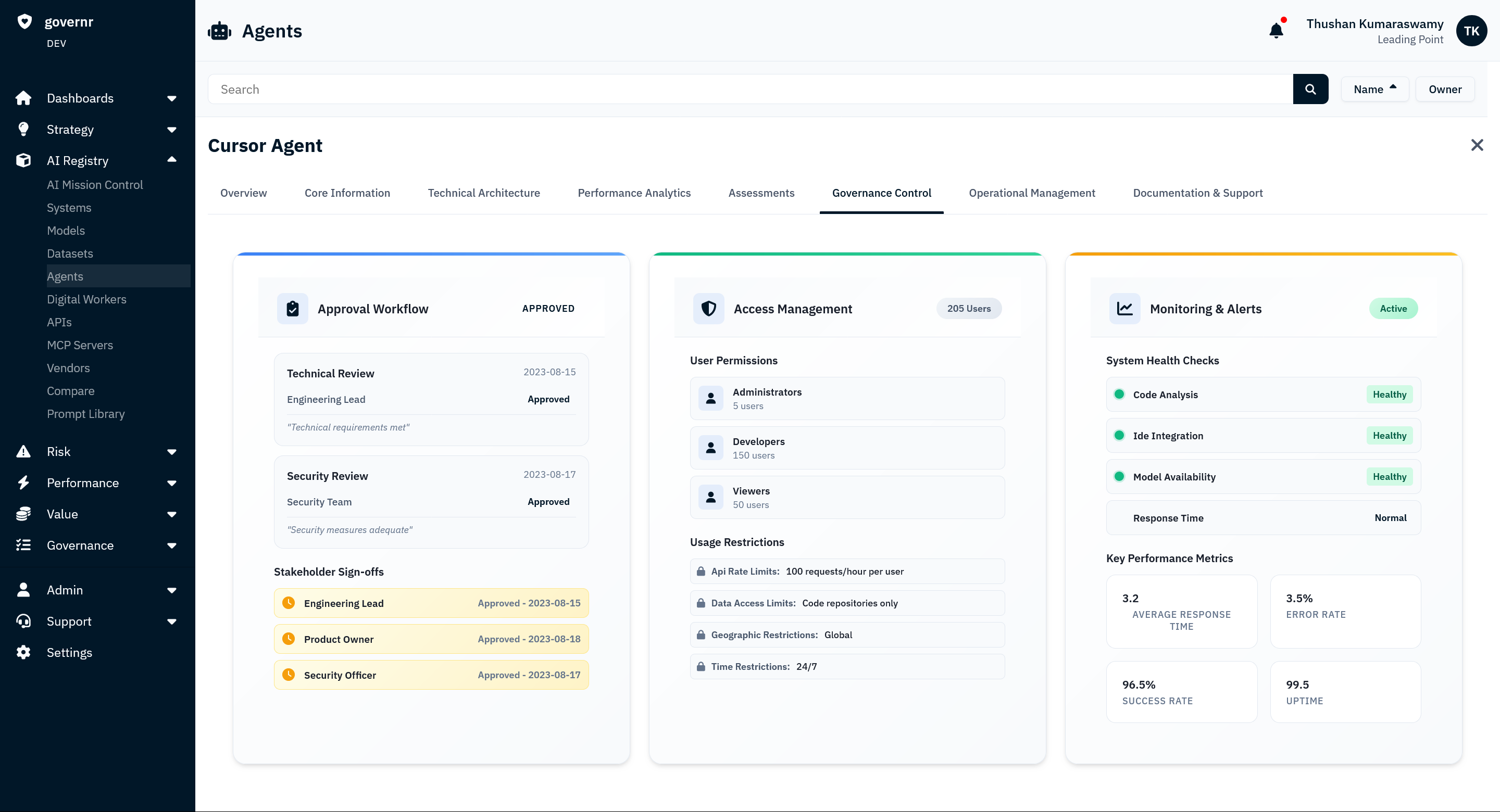

The five controls that matter most for agentic AI

If organisations want a practical model for governing AI agents, five control areas matter immediately.

1. Permission boundaries

What systems, tools, and data sources can the agent access? Under what conditions? Using whose authority?

2. Action authority

What can the agent actually do? Read? Write? Escalate? Execute? Purchase? Modify? Send? Delete?

3. Escalation thresholds

At what point must the agent pause, request human approval, or hand off to a supervisor?

4. Runtime supervision

Who can observe the agent while it operates? What alerts exist? What intervention rights exist? What exceptions are visible in real time?

5. Change management

How does the organisation detect changes in the agent’s capabilities, permissions, toolchain, or connected systems?

These are not secondary questions. They are the core of the supervisory model.
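As a rough illustration, the five control areas can be expressed as one pre-action gate. This is a minimal Python sketch under stated assumptions: `AgentPolicy`, `ActionRequest`, `evaluate`, and `fingerprint` are hypothetical names, and using a single numeric value as the escalation threshold is a simplification chosen for the example.

```python
import hashlib
import json
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    allowed_systems: frozenset   # 1. permission boundaries
    allowed_actions: frozenset   # 2. action authority
    approval_limit: float        # 3. escalation threshold (assumed: a value cap)


@dataclass(frozen=True)
class ActionRequest:
    system: str
    action: str
    value: float = 0.0


def evaluate(policy: AgentPolicy, request: ActionRequest, halted: bool = False) -> Decision:
    """Decide whether a requested action may proceed, must escalate, or is refused."""
    if halted:  # 4. runtime supervision: an operator has stopped the agent
        return Decision.DENY
    if request.system not in policy.allowed_systems:
        return Decision.DENY
    if request.action not in policy.allowed_actions:
        return Decision.DENY
    if request.value > policy.approval_limit:
        return Decision.ESCALATE  # pause and hand off to a human approver
    return Decision.ALLOW


def fingerprint(policy: AgentPolicy) -> str:
    """5. Change management: a stable hash that changes whenever the policy does."""
    canonical = json.dumps(
        {
            "systems": sorted(policy.allowed_systems),
            "actions": sorted(policy.allowed_actions),
            "limit": policy.approval_limit,
        },
        sort_keys=True,
    )
    return hashlib.sha256(canonical.encode()).hexdigest()
```

Comparing the stored fingerprint of an approved policy with the fingerprint of the policy currently in force is one simple way to notice that permissions have changed without review.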

Why MCP and external toolchains increase the pressure

This gets more urgent as the agent ecosystem becomes more connected. Protocols and frameworks that let agents interact with external tools, services, and resources are making agentic workflows far more useful. They are also creating a much larger control surface.

Cloudflare recently highlighted the need for least-privilege controls around MCP server access and agent interaction with enterprise resources, specifically to reduce the risk of uncontrolled or rogue actions. That is exactly the direction serious governance needs to move in.

Once an agent can call tools across the enterprise, the governance problem extends beyond the model. It includes:

- which servers the agent can reach

- which tools it can invoke

- how access is granted

- how permissions are bounded

- how external dependencies are governed

- how misuse and compromise are detected

This is why AI agent security and AI agent governance are increasingly converging.
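A deny-by-default grant table is one way to picture least privilege across tool servers. The Python sketch below is an assumption-laden illustration: the server names, tool names, and helper functions are hypothetical, and it does not use or represent any real MCP SDK or Cloudflare API.

```python
# Hypothetical grant table: server name -> tools the agent may invoke there.
AGENT_GRANTS = {
    "crm-server": {"lookup_customer"},
    "docs-server": {"search", "fetch_page"},
}


def is_call_permitted(grants: dict, server: str, tool: str) -> bool:
    """Deny by default: unknown servers and unlisted tools are both refused."""
    return tool in grants.get(server, set())


def filter_advertised_tools(grants: dict, server: str, advertised: list) -> list:
    """Expose to the agent only the granted subset of what a server advertises."""
    granted = grants.get(server, set())
    return [tool for tool in advertised if tool in granted]
```

Filtering what the agent can see, as well as what it can call, keeps a newly added server-side tool out of reach until someone explicitly grants it.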

Why old model governance logic is too narrow

A lot of organisations still think about this through the lens of classic model risk management. That is too narrow.

For agentic AI, the supervisory model needs to draw from multiple disciplines at once:

- AI governance

- access control

- operational risk

- workflow supervision

- incident management

- system safety engineering

- third-party dependency oversight

That is because the object being governed is not just a model. It is a semi-autonomous operational actor. And that has very different implications for control design.

The practical questions firms should ask before scaling agents

Before agentic systems are deployed broadly, organisations should be able to answer:

- What is this agent authorised to do?

- What systems can it touch?

- What tools can it call?

- What data can it access?

- What hard boundaries exist?

- What triggers escalation?

- What logs and evidence are captured?

- Who can stop it?

- How do we detect a material change in behaviour or permissions?

If those questions do not have clear answers, then the organisation does not yet have a mature governance model for agentic AI. It has enthusiasm. That is not the same thing.
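One lightweight way to test readiness is to treat the questions above as required fields in a per-agent record and refuse to scale any agent whose record has gaps. The Python sketch below assumes a hypothetical manifest format; the field names are illustrative, not a standard.

```python
# Hypothetical field names, one per question in the list above.
REQUIRED_FIELDS = [
    "authorised_actions",
    "systems",
    "tools",
    "data_sources",
    "hard_boundaries",
    "escalation_triggers",
    "evidence_captured",
    "stop_authority",
    "change_detection",
]


def unanswered(manifest: dict) -> list:
    """Return the governance questions this manifest leaves missing or empty."""
    return [name for name in REQUIRED_FIELDS if not manifest.get(name)]


def ready_to_scale(manifest: dict) -> bool:
    """An agent is ready only when every question has a non-empty answer."""
    return not unanswered(manifest)
```

A non-empty answer is only a minimum bar in this sketch. Whether the answer is adequate still needs human review.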

The opportunity in front of firms now

The good news is that many firms are still early enough in the agentic AI adoption curve to define the supervisory model before the problem scales. That matters, because the most expensive governance failures usually happen when organisations retrofit controls after adoption is already widespread.

With agents, firms still have a chance to establish the right architecture now:

- bounded authority

- monitored runtime behaviour

- human intervention pathways

- least-privilege access

- structured ownership

- change detection

- evidence that is usable for audit and regulatory review

That is where serious organisations should focus. The question is not whether agents are exciting. It is whether the firm has built the supervisory model required before an autonomous system is allowed to act across live business environments. That is the real governance question. And it is very different from governing a standard model deployment.